iOS VAD library is designed to process audio in real-time and identify presence of human speech in audio samples that contain a mixture of speech and noise. The VAD functionality operates offline, performing all processing tasks directly on the mobile device.

The repository offers three distinct models for voice activity detection:

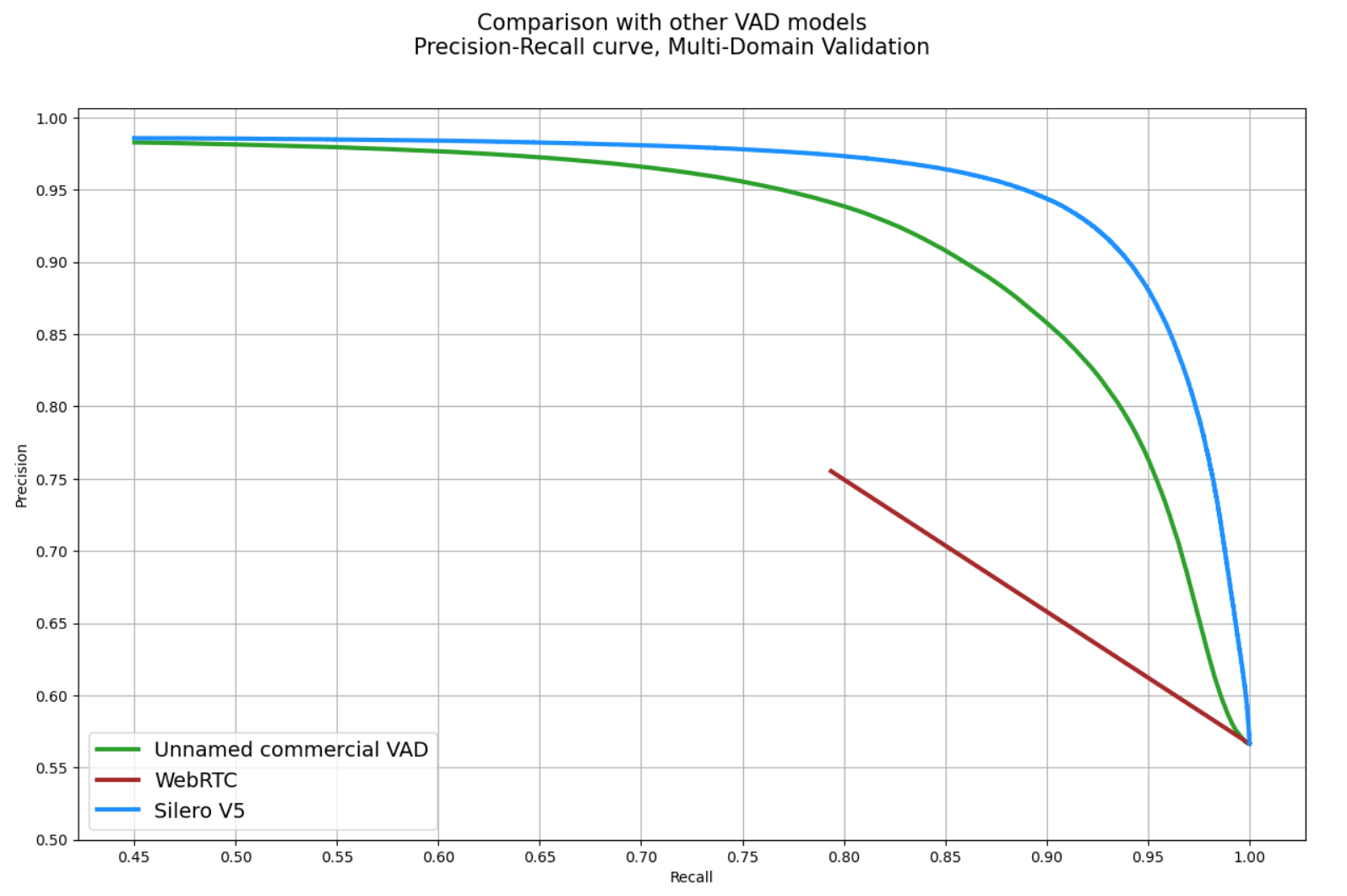

WebRTC VAD [1] is based on a Gaussian Mixture Model (GMM) which is known for its exceptional speed and effectiveness in distinguishing between noise and silence. However, it may demonstrate relatively lower accuracy when it comes to differentiating speech from background noise.

Silero VAD [2] is based on a Deep Neural Networks (DNN) and utilizes the ONNX Runtime Mobile for execution. It provides exceptional accuracy and achieves processing time that is very close to WebRTC VAD.

Yamnet VAD [3] is based on a Deep Neural Networks (DNN) and employs the Mobilenet_v1 depthwise-separable convolution architecture. For execution utilizes the Tensorflow Lite runtime. Yamnet VAD can predict 522 audio event classes (such as speech, music, animal sounds and etc). It was trained on AudioSet-YouTube corpus.

WebRTC VAD is lightweight (only 158 KB) and provides exceptional speed in audio processing, but it may exhibit lower accuracy compared to DNN models. WebRTC VAD can be invaluable in scenarios where a small and fast library is necessary and where sacrificing accuracy is acceptable. In situations where high accuracy is critical, models like Silero VAD and Yamnet VAD are more preferable. For more detailed insights and a comprehensive comparison between DNN and GMM, refer to the following comparison Silero VAD vs WebRTC VAD.

WebRTC VAD library only accepts 16-bit Mono PCM audio stream and can work with next Sample Rates, Frame Sizes and Modes.

|

|

Recommended parameters for WebRTC VAD:

- Sample Rate (required) - 16KHz - The sample rate of the audio input.

- Frame Size (required) - 320 - The frame size of the audio input.

- Mode (required) - VERY_AGGRESSIVE - The confidence mode of the VAD model.

- Silence Duration (optional) - 300ms - The minimum duration in milliseconds for silence segments.

- Speech Duration (optional) - 50ms - The minimum duration in milliseconds for speech segments.

WebRTC VAD can identify speech in short audio frames, returning results for each frame. By utilizing parameters such as silenceDurationMs and speechDurationMs, you can enhance the capability of VAD, enabling the detection of prolonged utterances while minimizing false positive results during pauses between sentences.

Swift example:

// TODOObjective-C example:

// TODOAn example of how to detect speech in an audio file:

// TODOSilero VAD library only accepts 16-bit Mono PCM audio stream and can work with next Sample Rates, Frame Sizes and Modes.

|

|

Recommended parameters for Silero VAD:

- Context (required) - The Context is required to facilitate reading the model file from the Android file system.

- Sample Rate (required) - 16KHz - The sample rate of the audio input.

- Frame Size (required) - 512 - The frame size of the audio input.

- Mode (required) - NORMAL - The confidence mode of the VAD model.

- Silence Duration (optional) - 300ms - The minimum duration in milliseconds for silence segments.

- Speech Duration (optional) - 50ms - The minimum duration in milliseconds for speech segments.

Silero VAD can identify speech in short audio frames, returning results for each frame. By utilizing parameters such as silenceDurationMs and speechDurationMs, you can enhance the capability of VAD, enabling the detection of prolonged utterances while minimizing false positive results during pauses between sentences.

Swift example:

// TODOObjective-C example:

// TODOYamnet VAD library only accepts 16-bit Mono PCM audio stream and can work with next Sample Rates, Frame Sizes and Modes.

|

|

Recommended parameters for Yamnet VAD:

- Context (required) - The Context is required to facilitate reading the model file from the Android file system.

- Sample Rate (required) - 16KHz - The sample rate of the audio input.

- Frame Size (required) - 243 - The frame size of the audio input.

- Mode (required) - NORMAL - The confidence mode of the VAD model.

- Silence Duration (optional) - 30ms - The minimum duration in milliseconds for silence segments.

- Speech Duration (optional) - 30ms - The minimum duration in milliseconds for speech segments.

Yamnet VAD can identify 521 audio event classes (such as speech, music, animal sounds and etc) in small audio frames. By utilizing parameters such as silenceDurationMs and speechDurationMs and specifying sound category (ex. classifyAudio("Speech", audioData)), you can enhance the capability of VAD, enabling the detection of prolonged utterances while minimizing false positive results during pauses between sentences.

Swift example:

// TODOObjective-C example:

// TODOWebRTC VAD - iOS XX and later.

Silero VAD - iOS XX and later.

Yamnet VAD - iOS XX and later.

CocoaPods is the only supported build configuration, so just add the dependency to your project Podfile file:

- Add it in your Podfile at the end of repositories:

// TODO- Add one dependency from list below:

// TODO// TODO// TODO[1] WebRTC VAD - Voice Activity Detector from Google which is reportedly one of the best available: it's fast, modern and free. This algorithm has found wide adoption and has recently become one of the gold-standards for delay-sensitive scenarios like web-based interaction.

[2] Silero VAD - pre-trained enterprise-grade Voice Activity Detector, Number Detector and Language Classifier [email protected].

[3] Yamnet VAD - YAMNet is a pretrained deep neural network that can predicts 522 audio event classes based on the AudioSet-YouTube corpus, employing the Mobilenet_v1 depthwise-separable convolution architecture.

Bao Chuquan 2024 (c) MIT License