diff --git a/go.mod b/go.mod

index c621f4eb..e88940e8 100644

--- a/go.mod

+++ b/go.mod

@@ -58,6 +58,7 @@ require (

github.com/cespare/xxhash/v2 v2.2.0 // indirect

github.com/chenzhuoyu/base64x v0.0.0-20221115062448-fe3a3abad311 // indirect

github.com/dustin/go-humanize v1.0.1 // indirect

+ github.com/fatih/color v1.15.0 // indirect

github.com/gabriel-vasile/mimetype v1.4.2 // indirect

github.com/gin-contrib/sse v0.1.0 // indirect

github.com/glebarez/go-sqlite v1.20.3 // indirect

@@ -67,6 +68,7 @@ require (

github.com/go-shiori/dom v0.0.0-20230515143342-73569d674e1c // indirect

github.com/go-shiori/go-readability v0.0.0-20230421032831-c66949dfc0ad // indirect

github.com/goccy/go-json v0.10.2 // indirect

+ github.com/goccy/go-yaml v1.11.2 // indirect

github.com/gogs/chardet v0.0.0-20211120154057-b7413eaefb8f // indirect

github.com/golang/protobuf v1.5.3 // indirect

github.com/google/go-cmp v0.5.9 // indirect

@@ -83,6 +85,7 @@ require (

github.com/jinzhu/now v1.1.5 // indirect

github.com/json-iterator/go v1.1.12 // indirect

github.com/klauspost/compress v1.16.0 // indirect

+ github.com/mattn/go-colorable v0.1.13 // indirect

github.com/mattn/go-isatty v0.0.19 // indirect

github.com/mattn/go-runewidth v0.0.9 // indirect

github.com/matttproud/golang_protobuf_extensions v1.0.4 // indirect

@@ -105,6 +108,7 @@ require (

github.com/twitchyliquid64/golang-asm v0.15.1 // indirect

golang.org/x/text v0.13.0 // indirect

golang.org/x/time v0.3.0 // indirect

+ golang.org/x/xerrors v0.0.0-20220907171357-04be3eba64a2 // indirect

google.golang.org/protobuf v1.30.0 // indirect

gopkg.in/ini.v1 v1.67.0 // indirect

gopkg.in/tomb.v1 v1.0.0-20141024135613-dd632973f1e7 // indirect

diff --git a/go.sum b/go.sum

index 87ee0a14..252e2ab5 100644

--- a/go.sum

+++ b/go.sum

@@ -95,6 +95,8 @@ github.com/denisbrodbeck/machineid v1.0.1/go.mod h1:dJUwb7PTidGDeYyUBmXZ2GphQBbj

github.com/denisenkom/go-mssqldb v0.12.0 h1:VtrkII767ttSPNRfFekePK3sctr+joXgO58stqQbtUA=

github.com/dustin/go-humanize v1.0.1 h1:GzkhY7T5VNhEkwH0PVJgjz+fX1rhBrR7pRT3mDkpeCY=

github.com/dustin/go-humanize v1.0.1/go.mod h1:Mu1zIs6XwVuF/gI1OepvI0qD18qycQx+mFykh5fBlto=

+github.com/fatih/color v1.15.0 h1:kOqh6YHBtK8aywxGerMG2Eq3H6Qgoqeo13Bk2Mv/nBs=

+github.com/fatih/color v1.15.0/go.mod h1:0h5ZqXfHYED7Bhv2ZJamyIOUej9KtShiJESRwBDUSsw=

github.com/fsnotify/fsnotify v1.4.7/go.mod h1:jwhsz4b93w/PPRr/qN1Yymfu8t87LnFCMoQvtojpjFo=

github.com/fsnotify/fsnotify v1.4.9/go.mod h1:znqG4EE+3YCdAaPaxE2ZRY/06pZUdp0tY4IgpuI1SZQ=

github.com/fsnotify/fsnotify v1.6.0 h1:n+5WquG0fcWoWp6xPWfHdbskMCQaFnG6PfBrh1Ky4HY=

@@ -119,6 +121,7 @@ github.com/go-gormigrate/gormigrate/v2 v2.0.2 h1:YV4Lc5yMQX8ahVW0ENPq6sPhrhdkGuk

github.com/go-gormigrate/gormigrate/v2 v2.0.2/go.mod h1:vld36QpBTfTzLealsHsmQQJK5lSwJt6wiORv+oFX8/I=

github.com/go-kit/log v0.1.0/go.mod h1:zbhenjAZHb184qTLMA9ZjW7ThYL0H2mk7Q6pNt4vbaY=

github.com/go-logfmt/logfmt v0.5.0/go.mod h1:wCYkCAKZfumFQihp8CzCvQ3paCTfi41vtzG1KdI/P7A=

+github.com/go-logfmt/logfmt v0.5.1/go.mod h1:WYhtIu8zTZfxdn5+rREduYbwxfcBr/Vr6KEVveWlfTs=

github.com/go-logr/logr v1.2.4 h1:g01GSCwiDw2xSZfjJ2/T9M+S6pFdcNtFYsp+Y43HYDQ=

github.com/go-playground/assert/v2 v2.0.1/go.mod h1:VDjEfimB/XKnb+ZQfWdccd7VUvScMdVu0Titje2rxJ4=

github.com/go-playground/assert/v2 v2.2.0 h1:JvknZsQTYeFEAhQwI4qEt9cyV5ONwRHC+lYKSsYSR8s=

@@ -142,6 +145,8 @@ github.com/go-task/slim-sprig v0.0.0-20230315185526-52ccab3ef572 h1:tfuBGBXKqDEe

github.com/goccy/go-json v0.9.7/go.mod h1:6MelG93GURQebXPDq3khkgXZkazVtN9CRI+MGFi0w8I=

github.com/goccy/go-json v0.10.2 h1:CrxCmQqYDkv1z7lO7Wbh2HN93uovUHgrECaO5ZrCXAU=

github.com/goccy/go-json v0.10.2/go.mod h1:6MelG93GURQebXPDq3khkgXZkazVtN9CRI+MGFi0w8I=

+github.com/goccy/go-yaml v1.11.2 h1:joq77SxuyIs9zzxEjgyLBugMQ9NEgTWxXfz2wVqwAaQ=

+github.com/goccy/go-yaml v1.11.2/go.mod h1:wKnAMd44+9JAAnGQpWVEgBzGt3YuTaQ4uXoHvE4m7WU=

github.com/gofrs/uuid v4.0.0+incompatible h1:1SD/1F5pU8p29ybwgQSwpQk+mwdRrXCYuPhW6m+TnJw=

github.com/gofrs/uuid v4.0.0+incompatible/go.mod h1:b2aQJv3Z4Fp6yNu3cdSllBxTCLRxnplIgP/c0N/04lM=

github.com/gogs/chardet v0.0.0-20211120154057-b7413eaefb8f h1:3BSP1Tbs2djlpprl7wCLuiqMaUh5SJkkzI2gDs+FgLs=

@@ -239,10 +244,12 @@ github.com/jinzhu/now v1.1.5/go.mod h1:d3SSVoowX0Lcu0IBviAWJpolVfI5UJVZZ7cO71lE/

github.com/jmespath/go-jmespath v0.4.0/go.mod h1:T8mJZnbsbmF+m6zOOFylbeCJqk5+pHWvzYPziyZiYoo=

github.com/jmespath/go-jmespath/internal/testify v1.5.1/go.mod h1:L3OGu8Wl2/fWfCI6z80xFu9LTZmf1ZRjMHUOPmWr69U=

github.com/joho/godotenv v1.4.0 h1:3l4+N6zfMWnkbPEXKng2o2/MR5mSwTrBih4ZEkkz1lg=

+github.com/jpillora/backoff v1.0.0/go.mod h1:J/6gKK9jxlEcS3zixgDgUAsiuZ7yrSoa/FX5e0EB2j4=

github.com/jrick/logrotate v1.0.0/go.mod h1:LNinyqDIJnpAur+b8yyulnQw/wDuN1+BYKlTRt3OuAQ=

github.com/json-iterator/go v1.1.10/go.mod h1:KdQUCv79m/52Kvf8AW2vK1V8akMuk1QjK/uOdHXbAo4=

github.com/json-iterator/go v1.1.12 h1:PV8peI4a0ysnczrg+LtxykD8LfKY9ML6u2jnxaEnrnM=

github.com/json-iterator/go v1.1.12/go.mod h1:e30LSqwooZae/UwlEbR2852Gd8hjQvJoHmT4TnhNGBo=

+github.com/julienschmidt/httprouter v1.3.0/go.mod h1:JR6WtHb+2LUe8TCKY3cZOxFyyO8IZAc4RVcycCCAKdM=

github.com/kisielk/gotool v1.0.0/go.mod h1:XhKaO+MFFWcvkIS/tQcRk01m1F5IRFswLeQ+oQHNcck=

github.com/kkdai/bstream v0.0.0-20161212061736-f391b8402d23/go.mod h1:J+Gs4SYgM6CZQHDETBtE9HaSEkGmuNXF86RwHhHUvq4=

github.com/klauspost/compress v1.16.0 h1:iULayQNOReoYUe+1qtKOqw9CwJv3aNQu8ivo7lw1HU4=

@@ -277,10 +284,13 @@ github.com/lib/pq v1.10.2 h1:AqzbZs4ZoCBp+GtejcpCpcxM3zlSMx29dXbUSeVtJb8=

github.com/lib/pq v1.10.2/go.mod h1:AlVN5x4E4T544tWzH6hKfbfQvm3HdbOxrmggDNAPY9o=

github.com/mattn/go-colorable v0.1.1/go.mod h1:FuOcm+DKB9mbwrcAfNl7/TZVBZ6rcnceauSikq3lYCQ=

github.com/mattn/go-colorable v0.1.6/go.mod h1:u6P/XSegPjTcexA+o6vUJrdnUu04hMope9wVRipJSqc=

+github.com/mattn/go-colorable v0.1.13 h1:fFA4WZxdEF4tXPZVKMLwD8oUnCTTo08duU7wxecdEvA=

+github.com/mattn/go-colorable v0.1.13/go.mod h1:7S9/ev0klgBDR4GtXTXX8a3vIGJpMovkB8vQcUbaXHg=

github.com/mattn/go-isatty v0.0.5/go.mod h1:Iq45c/XA43vh69/j3iqttzPXn0bhXyGjM0Hdxcsrc5s=

github.com/mattn/go-isatty v0.0.7/go.mod h1:Iq45c/XA43vh69/j3iqttzPXn0bhXyGjM0Hdxcsrc5s=

github.com/mattn/go-isatty v0.0.12/go.mod h1:cbi8OIDigv2wuxKPP5vlRcQ1OAZbq2CE4Kysco4FUpU=

github.com/mattn/go-isatty v0.0.14/go.mod h1:7GGIvUiUoEMVVmxf/4nioHXj79iQHKdU27kJ6hsGG94=

+github.com/mattn/go-isatty v0.0.16/go.mod h1:kYGgaQfpe5nmfYZH+SKPsOc2e4SrIfOl2e/yFXSvRLM=

github.com/mattn/go-isatty v0.0.19 h1:JITubQf0MOLdlGRuRq+jtsDlekdYPia9ZFsB8h/APPA=

github.com/mattn/go-isatty v0.0.19/go.mod h1:W+V8PltTTMOvKvAeJH7IuucS94S2C6jfK/D7dTCTo3Y=

github.com/mattn/go-runewidth v0.0.9 h1:Lm995f3rfxdpd6TSmuVCHVb/QhupuXlYr8sCI/QdE+0=

@@ -308,6 +318,7 @@ github.com/modern-go/concurrent v0.0.0-20180306012644-bacd9c7ef1dd/go.mod h1:6dJ

github.com/modern-go/reflect2 v0.0.0-20180701023420-4b7aa43c6742/go.mod h1:bx2lNnkwVCuqBIxFjflWJWanXIb3RllmbCylyMrvgv0=

github.com/modern-go/reflect2 v1.0.2 h1:xBagoLtFs94CBntxluKeaWgTMpvLxC4ur3nMaC9Gz0M=

github.com/modern-go/reflect2 v1.0.2/go.mod h1:yWuevngMOJpCy52FWWMvUC8ws7m/LJsjYzDa0/r8luk=

+github.com/mwitkow/go-conntrack v0.0.0-20190716064945-2f068394615f/go.mod h1:qRWi+5nqEBWmkhHvq77mSJWrCKwh8bxhgT7d/eI7P4U=

github.com/nxadm/tail v1.4.4/go.mod h1:kenIhsEOeOJmVchQTgglprH7qJGnHDVpk1VPCcaMI8A=

github.com/nxadm/tail v1.4.8 h1:nPr65rt6Y5JFSKQO7qToXr7pePgD6Gwiw05lkbyAQTE=

github.com/nxadm/tail v1.4.8/go.mod h1:+ncqLTQzXmGhMZNUePPaPqPvBxHAIsmXswZKocGu+AU=

@@ -332,6 +343,7 @@ github.com/pingcap/errors v0.11.4 h1:lFuQV/oaUMGcD2tqt+01ROSmJs75VG1ToEOkZIZ4nE4

github.com/pkg/diff v0.0.0-20210226163009-20ebb0f2a09e/go.mod h1:pJLUxLENpZxwdsKMEsNbx1VGcRFpLqf3715MtcvvzbA=

github.com/pkg/errors v0.8.1/go.mod h1:bwawxfHBFNV+L2hUp1rHADufV3IMtnDRdf1r5NINEl0=

github.com/pkg/errors v0.9.1 h1:FEBLx1zS214owpjy7qsBeixbURkuhQAwrK5UwLGTwt4=

+github.com/pkg/errors v0.9.1/go.mod h1:bwawxfHBFNV+L2hUp1rHADufV3IMtnDRdf1r5NINEl0=

github.com/pmezard/go-difflib v1.0.0 h1:4DBwDE0NGyQoBHbLQYPwSUPoCMWR5BEzIk/f1lZbAQM=

github.com/pmezard/go-difflib v1.0.0/go.mod h1:iKH77koFhYxTK1pcRnkKkqfTogsbg7gZNVY4sRDYZ/4=

github.com/prometheus/client_golang v1.16.0 h1:yk/hx9hDbrGHovbci4BY+pRMfSuuat626eFsHb7tmT8=

@@ -363,6 +375,7 @@ github.com/sergi/go-diff v1.1.0 h1:we8PVUC3FE2uYfodKH/nBHMSetSfHDR6scGdBi+erh0=

github.com/shopspring/decimal v0.0.0-20180709203117-cd690d0c9e24/go.mod h1:M+9NzErvs504Cn4c5DxATwIqPbtswREoFCre64PpcG4=

github.com/shopspring/decimal v1.2.0 h1:abSATXmQEYyShuxI4/vyW3tV1MrKAJzCZ/0zLUXYbsQ=

github.com/shopspring/decimal v1.2.0/go.mod h1:DKyhrW/HYNuLGql+MJL6WCR6knT2jwCFRcu2hWCYk4o=

+github.com/shurcooL/sanitized_anchor_name v1.0.0/go.mod h1:1NzhyTcUVG4SuEtjjoZeVRXNmyL/1OwPU0+IJeTBvfc=

github.com/sirupsen/logrus v1.4.1/go.mod h1:ni0Sbl8bgC9z8RoU9G6nDWqqs/fq4eDPysMBDgk/93Q=

github.com/sirupsen/logrus v1.4.2/go.mod h1:tLMulIdttU9McNUspp0xgXVQah82FyeX6MwdIuYE2rE=

github.com/sirupsen/logrus v1.9.0 h1:trlNQbNUG3OdDrDil03MCb1H2o9nJ1x4/5LYw7byDE0=

@@ -497,6 +510,7 @@ golang.org/x/sys v0.0.0-20210806184541-e5e7981a1069/go.mod h1:oPkhp1MJrh7nUepCBc

golang.org/x/sys v0.0.0-20220520151302-bc2c85ada10a/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

golang.org/x/sys v0.0.0-20220715151400-c0bba94af5f8/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

golang.org/x/sys v0.0.0-20220722155257-8c9f86f7a55f/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

+golang.org/x/sys v0.0.0-20220811171246-fbc7d0a398ab/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

golang.org/x/sys v0.0.0-20220908164124-27713097b956/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

golang.org/x/sys v0.5.0/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

golang.org/x/sys v0.6.0/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=

@@ -539,6 +553,8 @@ golang.org/x/xerrors v0.0.0-20190717185122-a985d3407aa7/go.mod h1:I/5z698sn9Ka8T

golang.org/x/xerrors v0.0.0-20191011141410-1b5146add898/go.mod h1:I/5z698sn9Ka8TeJc9MKroUUfqBBauWjQqLJ2OPfmY0=

golang.org/x/xerrors v0.0.0-20191204190536-9bdfabe68543/go.mod h1:I/5z698sn9Ka8TeJc9MKroUUfqBBauWjQqLJ2OPfmY0=

golang.org/x/xerrors v0.0.0-20200804184101-5ec99f83aff1/go.mod h1:I/5z698sn9Ka8TeJc9MKroUUfqBBauWjQqLJ2OPfmY0=

+golang.org/x/xerrors v0.0.0-20220907171357-04be3eba64a2 h1:H2TDz8ibqkAF6YGhCdN3jS9O0/s90v0rJh3X/OLHEUk=

+golang.org/x/xerrors v0.0.0-20220907171357-04be3eba64a2/go.mod h1:K8+ghG5WaK9qNqU5K3HdILfMLy1f3aNYFI/wnl100a8=

google.golang.org/protobuf v0.0.0-20200109180630-ec00e32a8dfd/go.mod h1:DFci5gLYBciE7Vtevhsrf46CRTquxDuWsQurQQe4oz8=

google.golang.org/protobuf v0.0.0-20200221191635-4d8936d0db64/go.mod h1:kwYJMbMJ01Woi6D6+Kah6886xMZcty6N08ah7+eCXa0=

google.golang.org/protobuf v0.0.0-20200228230310-ab0ca4ff8a60/go.mod h1:cfTl7dwQJ+fmap5saPgwCLgHXTUD7jkjRqWcaiX5VyM=

diff --git a/pkg/dentry/group.go b/pkg/dentry/group.go

index e67e9712..9da273a4 100644

--- a/pkg/dentry/group.go

+++ b/pkg/dentry/group.go

@@ -24,6 +24,7 @@ import (

"github.com/basenana/nanafs/pkg/plugin/pluginapi"

"github.com/basenana/nanafs/pkg/types"

"github.com/basenana/nanafs/utils/logger"

+ "go.uber.org/zap"

"path"

"runtime/trace"

"strings"

@@ -227,6 +228,7 @@ type extGroup struct {

mgr Manager

stdGroup *stdGroup

mirror plugin.MirrorPlugin

+ logger *zap.SugaredLogger

}

func (e *extGroup) FindEntry(ctx context.Context, name string) (*types.Metadata, error) {

@@ -258,25 +260,23 @@ func (e *extGroup) CreateEntry(ctx context.Context, attr EntryAttr) (*types.Meta

}

func (e *extGroup) UpdateEntry(ctx context.Context, entryId int64, patch *types.Metadata) error {

- group, err := e.stdGroup.cacheStore.getEntry(ctx, e.stdGroup.entryID)

+ // query old and write back

+ entry, err := e.stdGroup.cacheStore.getEntry(ctx, entryId)

if err != nil {

return err

}

- mirrorEn, err := e.mirror.FindEntry(ctx, group.Name)

+

+ mirrorEn, err := e.mirror.FindEntry(ctx, entry.Name)

if err != nil {

+ e.logger.Warnw("find entry in mirror plugin failed", "name", entry.Name, "err", err)

return err

}

mirrorEn.Size = patch.Size

- // query old and write back

- entry, err := e.stdGroup.cacheStore.getEntry(ctx, entryId)

- if err != nil {

- return err

- }

-

err = e.mirror.UpdateEntry(ctx, mirrorEn)

if err != nil {

+ e.logger.Warnw("update entry to mirror plugin failed", "name", entry.Name, "err", err)

return err

}

diff --git a/pkg/dentry/manager.go b/pkg/dentry/manager.go

index 791e7a04..8e6ab781 100644

--- a/pkg/dentry/manager.go

+++ b/pkg/dentry/manager.go

@@ -570,7 +570,8 @@ func (m *manager) OpenGroup(ctx context.Context, groupId int64) (Group, error) {

if err != nil {

return nil, err

}

- grp = &extGroup{mgr: m, stdGroup: stdGrp, mirror: mirror}

+ grp = &extGroup{mgr: m, stdGroup: stdGrp, mirror: mirror,

+ logger: logger.NewLogger("extLogger").With(zap.Int64("group", groupId))}

} else {

grp = emptyGroup{}

}

diff --git a/pkg/dispatch/workflow.go b/pkg/dispatch/workflow.go

index 83b434c7..d488e19f 100644

--- a/pkg/dispatch/workflow.go

+++ b/pkg/dispatch/workflow.go

@@ -75,7 +75,7 @@ func (w workflowAction) handleEvent(ctx context.Context, evt *types.EntryEvent)

}

// trigger workflow

- job, err = w.manager.TriggerWorkflow(ctx, wf.Id, evt.RefID, workflow.JobAttr{Reason: fmt.Sprintf("event: %s", evt.Type)})

+ job, err = w.manager.TriggerWorkflow(ctx, wf.Id, types.WorkflowTarget{EntryID: evt.RefID}, workflow.JobAttr{Reason: fmt.Sprintf("event: %s", evt.Type)})

if err != nil {

w.logger.Errorw("[workflowAction] workflow trigger failed", "entry", evt.RefID, "workflow", wf.Id, "err", err)

continue

diff --git a/pkg/metastore/sql.go b/pkg/metastore/sql.go

index 6b8ca82a..15ac1054 100644

--- a/pkg/metastore/sql.go

+++ b/pkg/metastore/sql.go

@@ -443,6 +443,7 @@ func (s *sqlMetaStore) ListWorkflowJob(ctx context.Context, filter types.JobFilt

func (s *sqlMetaStore) SaveWorkflow(ctx context.Context, wf *types.WorkflowSpec) error {

defer trace.StartRegion(ctx, "metastore.sql.SaveWorkflow").End()

+ wf.UpdatedAt = time.Now()

err := s.dbEntity.SaveWorkflow(ctx, wf)

if err != nil {

return db.SqlError2Error(err)

@@ -452,6 +453,7 @@ func (s *sqlMetaStore) SaveWorkflow(ctx context.Context, wf *types.WorkflowSpec)

func (s *sqlMetaStore) SaveWorkflowJob(ctx context.Context, wf *types.WorkflowJob) error {

defer trace.StartRegion(ctx, "metastore.sql.SaveWorkflowJob").End()

+ wf.UpdatedAt = time.Now()

err := s.dbEntity.SaveWorkflowJob(ctx, wf)

if err != nil {

return db.SqlError2Error(err)

diff --git a/pkg/plugin/buildin/rss.go b/pkg/plugin/buildin/rss.go

index bd7850d4..ac74d366 100644

--- a/pkg/plugin/buildin/rss.go

+++ b/pkg/plugin/buildin/rss.go

@@ -27,7 +27,6 @@ import (

"github.com/hyponet/webpage-packer/packer"

"github.com/mmcdole/gofeed"

"go.uber.org/zap"

- "io/ioutil"

"net/url"

"os"

"path"

@@ -41,6 +40,7 @@ const (

archiveFileTypeUrl = "url"

archiveFileTypeHtml = "html"

+ archiveFileTypeRawHtml = "rawhtml"

archiveFileTypeWebArchive = "webarchive"

)

@@ -82,12 +82,15 @@ func (r *RssSourcePlugin) SourceInfo() (string, error) {

}

func (r *RssSourcePlugin) Run(ctx context.Context, request *pluginapi.Request) (*pluginapi.Response, error) {

+ if request.ParentEntryId <= 0 {

+ return nil, fmt.Errorf("invalid parent entry id: %d", request.ParentEntryId)

+ }

source, err := r.rssSources(r.scope.Parameters)

if err != nil {

r.logger.Errorw("get rss source failed", "err", err)

return nil, err

}

- source.EntryId = request.EntryId

+ source.EntryId = request.ParentEntryId

entries, err := r.syncRssSource(ctx, source, request.WorkPath)

if err != nil {

@@ -95,6 +98,7 @@ func (r *RssSourcePlugin) Run(ctx context.Context, request *pluginapi.Request) (

return pluginapi.NewFailedResponse(fmt.Sprintf("sync rss failed: %s", err)), nil

}

results := []pluginapi.CollectManifest{{BaseEntry: source.EntryId, NewFiles: entries}}

+ r.logger.Infow("sync rss finish", "baseEntry", source.EntryId, "entries", len(entries))

return pluginapi.NewResponseWithResult(map[string]any{pluginapi.ResCollectManifests: results}), nil

}

@@ -114,7 +118,7 @@ func (r *RssSourcePlugin) rssSources(pluginParams map[string]string) (src rssSou

src.FileType = pluginParams["file_type"]

if src.FileType == "" {

- src.FileType = archiveFileTypeUrl

+ src.FileType = archiveFileTypeHtml

}

src.Timeout = 120

@@ -164,12 +168,20 @@ func (r *RssSourcePlugin) syncRssSource(ctx context.Context, source rssSource, w

buf.WriteString("\n")

buf.WriteString(fmt.Sprintf("URL=%s", item.Link))

- err = ioutil.WriteFile(filePath, buf.Bytes(), 0655)

+ err = os.WriteFile(filePath, buf.Bytes(), 0655)

if err != nil {

return nil, fmt.Errorf("pack to url file failed: %s", err)

}

case archiveFileTypeHtml:

+ filePath += ".html"

+ htmlContent := readableHtmlContent(item.Link, item.Title, item.Content)

+ err = os.WriteFile(filePath, []byte(htmlContent), 0655)

+ if err != nil {

+ return nil, fmt.Errorf("pack to html file failed: %s", err)

+ }

+

+ case archiveFileTypeRawHtml:

filePath += ".html"

p := packer.NewHtmlPacker()

err = p.Pack(ctx, packer.Option{

@@ -180,7 +192,7 @@ func (r *RssSourcePlugin) syncRssSource(ctx context.Context, source rssSource, w

Headers: headers,

})

if err != nil {

- return nil, fmt.Errorf("pack to html file failed: %s", err)

+ return nil, fmt.Errorf("pack to raw html file failed: %s", err)

}

case archiveFileTypeWebArchive:

@@ -216,7 +228,7 @@ func (r *RssSourcePlugin) syncRssSource(ctx context.Context, source rssSource, w

IsGroup: false,

})

}

- return nil, nil

+ return newEntries, nil

}

func BuildRssSourcePlugin(ctx context.Context, recorder metastore.PluginRecorder, spec types.PluginSpec, scope types.PlugScope) *RssSourcePlugin {

diff --git a/pkg/plugin/buildin/utils.go b/pkg/plugin/buildin/utils.go

new file mode 100644

index 00000000..d171d23e

--- /dev/null

+++ b/pkg/plugin/buildin/utils.go

@@ -0,0 +1,60 @@

+/*

+ Copyright 2023 NanaFS Authors.

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

+*/

+

+package buildin

+

+import (

+ "net/url"

+ "strings"

+)

+

+func readableHtmlContent(urlStr, title, content string) string {

+ var hostStr string

+ u, err := url.Parse(urlStr)

+ if err == nil {

+ hostStr = u.Host

+ } else {

+ hostStr = urlStr

+ }

+ patched := strings.ReplaceAll(readableHtmlTpl, "{TITLE}", title)

+ patched = strings.ReplaceAll(patched, "{HOST}", hostStr)

+ patched = strings.ReplaceAll(patched, "{URL}", urlStr)

+ patched = strings.ReplaceAll(patched, "{CONTENT}", content)

+

+ return patched

+}

+

+const readableHtmlTpl = `

+

+{TITLE}

+

+

+

+

+

+{CONTENT}

+

+`

diff --git a/pkg/plugin/pluginapi/process.go b/pkg/plugin/pluginapi/process.go

index 70ac2f4b..7e85d9b4 100644

--- a/pkg/plugin/pluginapi/process.go

+++ b/pkg/plugin/pluginapi/process.go

@@ -17,11 +17,12 @@

package pluginapi

type Request struct {

- Action string

- WorkPath string

- EntryId int64

- EntryPath string

- Parameter map[string]any

+ Action string

+ WorkPath string

+ EntryId int64

+ ParentEntryId int64

+ EntryPath string

+ Parameter map[string]any

}

func NewRequest() *Request {

diff --git a/pkg/types/object.go b/pkg/types/object.go

index decd049c..6a05ca0f 100644

--- a/pkg/types/object.go

+++ b/pkg/types/object.go

@@ -97,7 +97,7 @@ const (

type PlugScope struct {

PluginName string `json:"plugin_name"`

Version string `json:"version"`

- PluginType PluginType `json:"plugin_type"`

+ PluginType PluginType `json:"plugin_type,omitempty"`

Action string `json:"action,omitempty"`

Parameters map[string]string `json:"parameters"`

}

diff --git a/pkg/types/workflow.go b/pkg/types/workflow.go

index 678874db..eee89b29 100644

--- a/pkg/types/workflow.go

+++ b/pkg/types/workflow.go

@@ -39,11 +39,11 @@ type WorkflowStepSpec struct {

type WorkflowJob struct {

Id string `json:"id"`

Workflow string `json:"workflow"`

- TriggerReason string `json:"trigger_reason"`

+ TriggerReason string `json:"trigger_reason,omitempty"`

Target WorkflowTarget `json:"target"`

Steps []WorkflowJobStep `json:"steps"`

- Status string `json:"status"`

- Message string `json:"message"`

+ Status string `json:"status,omitempty"`

+ Message string `json:"message,omitempty"`

StartAt time.Time `json:"start_at"`

FinishAt time.Time `json:"finish_at"`

CreatedAt time.Time `json:"created_at"`

@@ -68,13 +68,14 @@ func (w *WorkflowJob) SetMessage(msg string) {

type WorkflowJobStep struct {

StepName string `json:"step_name"`

- Message string `json:"message"`

- Status string `json:"status"`

+ Message string `json:"message,omitempty"`

+ Status string `json:"status,omitempty"`

Plugin *PlugScope `json:"plugin,omitempty"`

}

type WorkflowTarget struct {

- EntryID int64 `json:"entry_id"`

+ EntryID int64 `json:"entry_id,omitempty"`

+ ParentEntryID int64 `json:"parent_entry_id,omitempty"`

}

type WorkflowEntryResult struct {

diff --git a/pkg/workflow/exec/executor.go b/pkg/workflow/exec/executor.go

index 52b5c61f..7d09db78 100644

--- a/pkg/workflow/exec/executor.go

+++ b/pkg/workflow/exec/executor.go

@@ -76,10 +76,12 @@ func (b *localExecutor) Setup(ctx context.Context) (err error) {

return logOperationError(localExecName, "setup", err)

}

- b.entryPath, err = entryWorkdirInit(ctx, b.job.Target.EntryID, b.entryMgr, b.workdir)

- if err != nil {

- b.logger.Errorw("copy target file to workdir failed", "err", err)

- return logOperationError(localExecName, "setup", err)

+ if b.job.Target.EntryID != 0 {

+ b.entryPath, err = entryWorkdirInit(ctx, b.job.Target.EntryID, b.entryMgr, b.workdir)

+ if err != nil {

+ b.logger.Errorw("copy target file to workdir failed", "err", err)

+ return logOperationError(localExecName, "setup", err)

+ }

}

b.logger.Infow("job setup", "workdir", b.workdir, "entryPath", b.entryPath)

@@ -93,6 +95,7 @@ func (b *localExecutor) DoOperation(ctx context.Context, step types.WorkflowJobS

req := pluginapi.NewRequest()

req.WorkPath = b.workdir

req.EntryId = b.job.Target.EntryID

+ req.ParentEntryId = b.job.Target.ParentEntryID

req.EntryPath = b.entryPath

req.Parameter = map[string]any{}

@@ -155,6 +158,7 @@ func (b *localExecutor) Collect(ctx context.Context) error {

return logOperationError(localExecName, "collect", fmt.Errorf(msg))

}

+ b.logger.Infow("collect files", "manifests", len(manifests))

var errList []error

for _, manifest := range manifests {

for _, file := range manifest.NewFiles {

diff --git a/pkg/workflow/jobrun/runner.go b/pkg/workflow/jobrun/runner.go

index 9fa71809..8f560507 100644

--- a/pkg/workflow/jobrun/runner.go

+++ b/pkg/workflow/jobrun/runner.go

@@ -72,6 +72,9 @@ func (r *runner) Start(ctx context.Context) (err error) {

r.logger.Errorf("job initial failed: %s", err)

return err

}

+ if r.job.StartAt.IsZero() {

+ r.job.StartAt = startAt

+ }

runnerStartedCounter.Add(1)

defer func() {

@@ -224,7 +227,7 @@ func (r *runner) handleJobResume(event fsm.Event) error {

func (r *runner) handleJobSucceed(event fsm.Event) error {

r.logger.Info("job succeed")

-

+ r.job.FinishAt = time.Now()

if err := r.recorder.SaveWorkflowJob(r.ctx, r.job); err != nil {

r.logger.Errorf("save job status failed: %s", err)

return err

@@ -235,7 +238,7 @@ func (r *runner) handleJobSucceed(event fsm.Event) error {

func (r *runner) handleJobFailed(event fsm.Event) error {

r.logger.Info("job failed")

-

+ r.job.FinishAt = time.Now()

if err := r.recorder.SaveWorkflowJob(r.ctx, r.job); err != nil {

r.logger.Errorf("save job status failed: %s", err)

return err

@@ -246,6 +249,7 @@ func (r *runner) handleJobFailed(event fsm.Event) error {

func (r *runner) handleJobCancel(event fsm.Event) error {

r.logger.Info("job cancel")

+ r.job.FinishAt = time.Now()

if err := r.recorder.SaveWorkflowJob(r.ctx, r.job); err != nil {

r.logger.Errorf("save job status failed: %s", err)

return err

@@ -266,7 +270,7 @@ func (r *runner) jobBatchRun() (finish bool, err error) {

}

if len(batch) == 0 {

- r.logger.Info("got empty batch, close finished job")

+ r.logger.Info("all batches finished, close job")

return true, nil

}

diff --git a/pkg/workflow/manager.go b/pkg/workflow/manager.go

index 3bf226b2..4a441bd3 100644

--- a/pkg/workflow/manager.go

+++ b/pkg/workflow/manager.go

@@ -40,8 +40,9 @@ type Manager interface {

UpdateWorkflow(ctx context.Context, spec *types.WorkflowSpec) (*types.WorkflowSpec, error)

DeleteWorkflow(ctx context.Context, wfId string) error

ListJobs(ctx context.Context, wfId string) ([]*types.WorkflowJob, error)

+ GetJob(ctx context.Context, wfId string, jobID string) (*types.WorkflowJob, error)

- TriggerWorkflow(ctx context.Context, wfId string, entryID int64, attr JobAttr) (*types.WorkflowJob, error)

+ TriggerWorkflow(ctx context.Context, wfId string, tgt types.WorkflowTarget, attr JobAttr) (*types.WorkflowJob, error)

PauseWorkflowJob(ctx context.Context, jobId string) error

ResumeWorkflowJob(ctx context.Context, jobId string) error

CancelWorkflowJob(ctx context.Context, jobId string) error

@@ -152,6 +153,14 @@ func (m *manager) DeleteWorkflow(ctx context.Context, wfId string) error {

return m.recorder.DeleteWorkflow(ctx, wfId)

}

+func (m *manager) GetJob(ctx context.Context, wfId string, jobID string) (*types.WorkflowJob, error) {

+ result, err := m.recorder.GetWorkflowJob(ctx, jobID)

+ if err != nil {

+ return nil, err

+ }

+ return result, nil

+}

+

func (m *manager) ListJobs(ctx context.Context, wfId string) ([]*types.WorkflowJob, error) {

result, err := m.recorder.ListWorkflowJob(ctx, types.JobFilter{WorkFlowID: wfId})

if err != nil {

@@ -160,24 +169,24 @@ func (m *manager) ListJobs(ctx context.Context, wfId string) ([]*types.WorkflowJ

return result, nil

}

-func (m *manager) TriggerWorkflow(ctx context.Context, wfId string, entryID int64, attr JobAttr) (*types.WorkflowJob, error) {

+func (m *manager) TriggerWorkflow(ctx context.Context, wfId string, tgt types.WorkflowTarget, attr JobAttr) (*types.WorkflowJob, error) {

workflow, err := m.GetWorkflow(ctx, wfId)

if err != nil {

return nil, err

}

- if entryID == 0 {

- return nil, fmt.Errorf("no entry martch")

- }

- m.logger.Infow("receive workflow", "workflow", workflow.Name, "entryID", entryID)

- var en *types.Metadata

- en, err = m.entryMgr.GetEntry(ctx, entryID)

- if err != nil {

- m.logger.Errorw("query entry failed", "workflow", workflow.Name, "entryID", entryID, "err", err)

- return nil, err

+ m.logger.Infow("receive workflow", "workflow", workflow.Name, "target", tgt)

+ if tgt.EntryID != 0 {

+ var en *types.Metadata

+ en, err = m.entryMgr.GetEntry(ctx, tgt.EntryID)

+ if err != nil {

+ m.logger.Errorw("query entry failed", "workflow", workflow.Name, "entryID", tgt.EntryID, "err", err)

+ return nil, err

+ }

+ tgt.ParentEntryID = en.ParentID

}

- job, err := assembleWorkflowJob(workflow, en)

+ job, err := assembleWorkflowJob(workflow, tgt)

if err != nil {

m.logger.Errorw("assemble job failed", "workflow", workflow.Name, "err", err)

return nil, err

@@ -265,11 +274,15 @@ func (d disabledManager) DeleteWorkflow(ctx context.Context, wfId string) error

return types.ErrNotFound

}

+func (d disabledManager) GetJob(ctx context.Context, wfId string, jobID string) (*types.WorkflowJob, error) {

+ return nil, types.ErrNotFound

+}

+

func (d disabledManager) ListJobs(ctx context.Context, wfId string) ([]*types.WorkflowJob, error) {

return nil, types.ErrNotFound

}

-func (d disabledManager) TriggerWorkflow(ctx context.Context, wfId string, entryID int64, attr JobAttr) (*types.WorkflowJob, error) {

+func (d disabledManager) TriggerWorkflow(ctx context.Context, wfId string, tgt types.WorkflowTarget, attr JobAttr) (*types.WorkflowJob, error) {

return nil, types.ErrNotFound

}

diff --git a/pkg/workflow/manager_test.go b/pkg/workflow/manager_test.go

index b2a55e90..968d49a4 100644

--- a/pkg/workflow/manager_test.go

+++ b/pkg/workflow/manager_test.go

@@ -139,7 +139,7 @@ var _ = Describe("TestWorkflowJobManage", func() {

var job *types.WorkflowJob

It("trigger workflow should be succeed", func() {

var err error

- job, err = mgr.TriggerWorkflow(ctx, wf.Id, en.ID, JobAttr{})

+ job, err = mgr.TriggerWorkflow(ctx, wf.Id, types.WorkflowTarget{EntryID: en.ID}, JobAttr{})

Expect(err).Should(BeNil())

Expect(job.Id).ShouldNot(BeEmpty())

})

@@ -163,7 +163,7 @@ var _ = Describe("TestWorkflowJobManage", func() {

var job *types.WorkflowJob

It("trigger workflow should be succeed", func() {

var err error

- job, err = mgr.TriggerWorkflow(ctx, wf.Id, en.ID, JobAttr{})

+ job, err = mgr.TriggerWorkflow(ctx, wf.Id, types.WorkflowTarget{EntryID: en.ID}, JobAttr{})

Expect(err).Should(BeNil())

Expect(job.Id).ShouldNot(BeEmpty())

@@ -229,7 +229,7 @@ var _ = Describe("TestWorkflowJobManage", func() {

var job *types.WorkflowJob

It("trigger workflow should be succeed", func() {

var err error

- job, err = mgr.TriggerWorkflow(ctx, wf.Id, en.ID, JobAttr{})

+ job, err = mgr.TriggerWorkflow(ctx, wf.Id, types.WorkflowTarget{EntryID: en.ID}, JobAttr{})

Expect(err).Should(BeNil())

Expect(job.Id).ShouldNot(BeEmpty())

diff --git a/pkg/workflow/mirrordir.go b/pkg/workflow/mirrordir.go

index 0a93a560..d5258406 100644

--- a/pkg/workflow/mirrordir.go

+++ b/pkg/workflow/mirrordir.go

@@ -25,7 +25,7 @@ import (

"github.com/basenana/nanafs/pkg/types"

"github.com/basenana/nanafs/pkg/workflow/jobrun"

"github.com/basenana/nanafs/utils"

- "gopkg.in/yaml.v3"

+ "github.com/goccy/go-yaml"

"path"

"strings"

"time"

@@ -49,17 +49,17 @@ var mirrorPlugin = types.PluginSpec{

}

/*

- MirrorPlugin is an implementation of plugin.MirrorPlugin,

- which supports managing workflows using POSIX operations.

+MirrorPlugin is an implementation of plugin.MirrorPlugin,

+which supports managing workflows using POSIX operations.

- virtual directory structure as follows:

- .

- |--workflows

- |--.yaml

- |--jobs

- |--

- |--.yaml

+virtual directory structure as follows:

+ .

+ |--workflows

+ |--.yaml

+ |--jobs

+ |--

+ |--.yaml

*/

type MirrorPlugin struct {

path string

@@ -92,8 +92,9 @@ func (m *MirrorPlugin) build(ctx context.Context, _ types.PluginSpec, scope type

return nil, err

}

- dirKind, wfID, err := parseFilePath(enPath)

+ dirKind, wfID, jobID, err := parseFilePath(enPath)

if err != nil {

+ wfLogger.Errorw("parse file path failed", "path", enPath, "err", err)

return nil, fmt.Errorf("unexcpect dir path %s", dirKind)

}

@@ -101,7 +102,7 @@ func (m *MirrorPlugin) build(ctx context.Context, _ types.PluginSpec, scope type

if en.IsGroup {

mp.dirHandler = &dirHandler{plugin: mp, dirKind: dirKind, wfID: wfID}

} else {

- mp.fileHandler = &fileHandler{plugin: mp, dirKind: dirKind, wfID: wfID}

+ mp.fileHandler = &fileHandler{plugin: mp, dirKind: dirKind, wfID: wfID, jobID: jobID}

}

return mp, nil

@@ -145,11 +146,11 @@ func (d *dirHandler) FindEntry(ctx context.Context, name string) (*pluginapi.Ent

}

if d.dirKind == MirrorDirWorkflows {

- _, err = d.plugin.mgr.GetWorkflow(ctx, mirrorFile2ID(name))

+ wf, err := d.plugin.mgr.GetWorkflow(ctx, mirrorFile2ID(name))

if err != nil {

return nil, err

}

- return d.plugin.fs.CreateEntry(d.plugin.path, pluginapi.EntryAttr{Name: name, Kind: types.RawKind})

+ return d.reloadWorkflowEntry(wf)

}

if d.dirKind == MirrorDirJobs {

@@ -271,7 +272,7 @@ func (d *dirHandler) ListChildren(ctx context.Context) ([]*pluginapi.Entry, erro

}

for _, wf := range wfList {

if _, ok := cachedChildMap[id2MirrorFile(wf.Id)]; !ok {

- child, err := d.plugin.fs.CreateEntry(d.plugin.path, pluginapi.EntryAttr{Name: id2MirrorFile(wf.Id), Kind: types.RawKind})

+ child, err := d.reloadWorkflowEntry(wf)

if err != nil {

wfLogger.Errorf("init mirror workflow file %s error: %s", id2MirrorFile(wf.Id), err)

return nil, err

@@ -301,7 +302,7 @@ func (d *dirHandler) ListChildren(ctx context.Context) ([]*pluginapi.Entry, erro

}

for _, j := range jobList {

if _, ok := cachedChildMap[id2MirrorFile(j.Id)]; !ok {

- child, err := d.plugin.fs.CreateEntry(d.plugin.path, pluginapi.EntryAttr{Name: id2MirrorFile(j.Id), Kind: types.RawKind})

+ child, err := d.reloadWorkflowJobEntry(j)

if err != nil {

wfLogger.Errorf("init mirror job file %s error: %s", id2MirrorFile(j.Id), err)

return nil, err

@@ -314,10 +315,45 @@ func (d *dirHandler) ListChildren(ctx context.Context) ([]*pluginapi.Entry, erro

return children, nil

}

+func (d *dirHandler) reloadWorkflowEntry(wf *types.WorkflowSpec) (*pluginapi.Entry, error) {

+ en, err := d.plugin.fs.CreateEntry(d.plugin.path, pluginapi.EntryAttr{Name: id2MirrorFile(wf.Id), Kind: types.RawKind})

+ if err != nil {

+ return nil, err

+ }

+ fPath := path.Join(d.plugin.path, en.Name)

+ err = d.plugin.fs.Trunc(fPath)

+ if err != nil {

+ return nil, err

+ }

+ err = yaml.NewEncoder(&memfsFile{filePath: fPath, entry: en, memfs: d.plugin.fs}).Encode(wf)

+ if err != nil {

+ return nil, err

+ }

+ return en, nil

+}

+

+func (d *dirHandler) reloadWorkflowJobEntry(job *types.WorkflowJob) (*pluginapi.Entry, error) {

+ en, err := d.plugin.fs.CreateEntry(d.plugin.path, pluginapi.EntryAttr{Name: id2MirrorFile(job.Id), Kind: types.RawKind})

+ if err != nil {

+ return nil, err

+ }

+ fPath := path.Join(d.plugin.path, en.Name)

+ err = d.plugin.fs.Trunc(fPath)

+ if err != nil {

+ return nil, err

+ }

+ err = yaml.NewEncoder(&memfsFile{filePath: fPath, entry: en, memfs: d.plugin.fs}).Encode(job)

+ if err != nil {

+ return nil, err

+ }

+ return en, nil

+}

+

type fileHandler struct {

- plugin *MirrorPlugin

- dirKind, wfID string

- err error

+ plugin *MirrorPlugin

+ dirKind string

+ wfID, jobID string

+ err error

}

func (f *fileHandler) WriteAt(ctx context.Context, data []byte, off int64) (int64, error) {

@@ -346,54 +382,59 @@ func (f *fileHandler) Close(ctx context.Context) error {

return err

}

- if strings.HasPrefix(en.Name, ".") {

+ if strings.HasPrefix(en.Name, ".") || path.Ext(en.Name) != MirrorFileType {

return nil

}

- op := "unknown"

+ var (

+ op = "unknown"

+ rawObj interface{}

+ )

switch {

case f.dirKind == MirrorDirWorkflows:

op = "create or update workflow"

- f.err = f.createOrUpdateWorkflow(ctx, en)

+ rawObj, f.err = f.createOrUpdateWorkflow(ctx, en)

case f.dirKind == MirrorDirJobs && f.wfID != "":

op = "update workflow job"

- f.err = f.triggerOrUpdateWorkflowJob(ctx, en)

+ rawObj, f.err = f.triggerOrUpdateWorkflowJob(ctx, en)

}

+ _ = f.plugin.fs.Trunc(f.plugin.path)

+ writer := &memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}

if f.err != nil {

wfLogger.Errorf("%s failed: %s", op, f.err)

- _, _ = f.plugin.fs.WriteAt(f.plugin.path, []byte(fmt.Sprintf("\n# error: %s\n", f.err)), en.Size)

+ _, _ = writer.Write([]byte(fmt.Sprintf("# error: %s\n", f.err)))

}

- return nil

+ return yaml.NewEncoder(writer).Encode(rawObj)

}

-func (f *fileHandler) createOrUpdateWorkflow(ctx context.Context, en *pluginapi.Entry) error {

+func (f *fileHandler) createOrUpdateWorkflow(ctx context.Context, en *pluginapi.Entry) (interface{}, error) {

wf := &types.WorkflowSpec{}

decodeErr := yaml.NewDecoder(&memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}).Decode(wf)

if decodeErr != nil {

- return decodeErr

+ wfLogger.Warnw("decode workflow file failed", "path", f.plugin.path, "en", en.Name, "err", decodeErr)

+ return nil, decodeErr

}

wfID := mirrorFile2ID(en.Name)

if err := isValidID(wfID); err != nil {

- return err

+ return nil, err

}

wf.Id = wfID

oldWf, err := f.plugin.mgr.GetWorkflow(ctx, wfID)

if err != nil && err != types.ErrNotFound {

- return err

+ return nil, err

}

// do create

if err == types.ErrNotFound {

wf, err = f.plugin.mgr.CreateWorkflow(ctx, initWorkflow(wf))

if err != nil {

- return err

+ return nil, err

}

- _ = f.plugin.fs.Trunc(f.plugin.path)

- return yaml.NewEncoder(&memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}).Encode(wf)

+ return wf, nil

}

// do update

@@ -406,35 +447,33 @@ func (f *fileHandler) createOrUpdateWorkflow(ctx context.Context, en *pluginapi.

oldWf, err = f.plugin.mgr.UpdateWorkflow(ctx, oldWf)

if err != nil {

- return err

+ return nil, err

}

- _ = f.plugin.fs.Trunc(f.plugin.path)

- _ = yaml.NewEncoder(&memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}).Encode(oldWf)

- return nil

+ return oldWf, nil

}

-func (f *fileHandler) triggerOrUpdateWorkflowJob(ctx context.Context, en *pluginapi.Entry) error {

+func (f *fileHandler) triggerOrUpdateWorkflowJob(ctx context.Context, en *pluginapi.Entry) (interface{}, error) {

wfJob := &types.WorkflowJob{}

- decodeErr := yaml.NewDecoder(&memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}).Decode(wfJob)

- if decodeErr != nil {

- return decodeErr

+ en, err := f.plugin.fs.GetEntry(f.plugin.path)

+ if err != nil {

+ return nil, err

+ }

+ if en.Size > 0 {

+ decodeErr := yaml.NewDecoder(&memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}).Decode(wfJob)

+ if decodeErr != nil {

+ wfLogger.Warnw("decode job file failed", "path", f.plugin.path, "en", en.Name, "err", decodeErr)

+ return nil, decodeErr

+ }

}

jobID := mirrorFile2ID(en.Name)

if err := isValidID(jobID); err != nil {

- return err

- }

-

- jobs, err := f.plugin.mgr.ListJobs(ctx, f.wfID)

- if err != nil {

- return err

+ return nil, err

}

- var oldJob *types.WorkflowJob

- for i, j := range jobs {

- if j.Id == jobID {

- oldJob = jobs[i]

- }

+ oldJob, err := f.plugin.mgr.GetJob(ctx, f.wfID, jobID)

+ if err != nil && err != types.ErrNotFound {

+ return nil, err

}

// do update

@@ -451,20 +490,16 @@ func (f *fileHandler) triggerOrUpdateWorkflowJob(ctx context.Context, en *plugin

err = fmt.Errorf("the current state is %s and cannot be changed to %s", oldJob.Status, wfJob.Status)

}

}

- return err

+ return oldJob, err

}

// do create

target := wfJob.Target

- wfJob, err = f.plugin.mgr.TriggerWorkflow(ctx, f.wfID, target.EntryID, JobAttr{JobID: jobID})

+ wfJob, err = f.plugin.mgr.TriggerWorkflow(ctx, f.wfID, target, JobAttr{JobID: jobID})

if err != nil {

- return err

- }

- encodeErr := yaml.NewEncoder(&memfsFile{filePath: f.plugin.path, entry: en, memfs: f.plugin.fs}).Encode(wfJob)

- if encodeErr != nil {

- return encodeErr

+ return nil, err

}

- return nil

+ return wfJob, nil

}

type memfsFile struct {

@@ -543,7 +578,7 @@ func id2MirrorFile(idStr string) string {

return idStr + MirrorFileType

}

-func parseFilePath(enPath string) (dirKind, wfID string, err error) {

+func parseFilePath(enPath string) (dirKind, wfID, jobID string, err error) {

if enPath == "/" {

dirKind = MirrorDirRoot

return

@@ -552,13 +587,22 @@ func parseFilePath(enPath string) (dirKind, wfID string, err error) {

pathParts := strings.SplitN(enPath, utils.PathSeparator, 3)

dirKind = pathParts[0]

- if dirKind != MirrorDirJobs && dirKind != MirrorDirWorkflows {

+ switch dirKind {

+ case MirrorDirJobs:

+ // path: /jobs//.yaml

+ if len(pathParts) == 3 {

+ jobID = mirrorFile2ID(pathParts[2])

+ }

+ fallthrough

+ case MirrorDirWorkflows:

+ // path: /workflows/.yaml

+ if len(pathParts) > 1 {

+ wfID = mirrorFile2ID(pathParts[1])

+ }

+ default:

err = fmt.Errorf("unknown dir %s", dirKind)

return

}

- if len(pathParts) > 1 {

- wfID = mirrorFile2ID(pathParts[1])

- }

return

}
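
Note on the `parseFilePath` change above: the new `switch` uses `fallthrough` so that a jobs path first picks up the job ID and then reuses the workflow-ID extraction of the workflows case. The following is a minimal standalone sketch of that control flow, not the package's actual code. The constant values (`"workflows"`, `"jobs"`, `".yaml"`, `"/"`), the leading-slash trimming, and the helper names `trimExt` and `parsePathSketch` are assumptions made for illustration, since the real constants and the elided lines of the function are not shown in this diff.

```go
package main

import (
	"fmt"
	"strings"
)

// Assumed values: the real constants are defined elsewhere in the package.
const (
	dirRoot      = "root"
	dirWorkflows = "workflows"
	dirJobs      = "jobs"
	fileExt      = ".yaml"
)

// trimExt is a hypothetical stand-in for mirrorFile2ID.
func trimExt(name string) string {
	return strings.TrimSuffix(name, fileExt)
}

// parsePathSketch mirrors the switch/fallthrough structure of the diff:
// a jobs path sets jobID, then falls through to set wfID as well.
func parsePathSketch(enPath string) (dirKind, wfID, jobID string, err error) {
	if enPath == "/" {
		return dirRoot, "", "", nil
	}
	// Assumption: the leading separator is dropped before splitting.
	parts := strings.SplitN(strings.TrimPrefix(enPath, "/"), "/", 3)
	dirKind = parts[0]
	switch dirKind {
	case dirJobs:
		if len(parts) == 3 {
			jobID = trimExt(parts[2])
		}
		fallthrough
	case dirWorkflows:
		if len(parts) > 1 {
			wfID = trimExt(parts[1])
		}
	default:
		err = fmt.Errorf("unknown dir %s", dirKind)
	}
	return
}

func main() {
	paths := []string{"/", "/workflows/wf-1.yaml", "/jobs/wf-1/job-1.yaml", "/other"}
	for _, p := range paths {
		kind, wf, job, err := parsePathSketch(p)
		fmt.Printf("%q -> kind=%q wf=%q job=%q err=%v\n", p, kind, wf, job, err)
	}
}
```

Because `fallthrough` transfers control unconditionally, the workflows branch still guards on `len(parts) > 1`, so a bare `/jobs` path yields empty IDs rather than an index panic.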

diff --git a/pkg/workflow/mirrordir_test.go b/pkg/workflow/mirrordir_test.go

index 02766ada..5c5a6a73 100644

--- a/pkg/workflow/mirrordir_test.go

+++ b/pkg/workflow/mirrordir_test.go

@@ -23,9 +23,9 @@ import (

"github.com/basenana/nanafs/pkg/types"

"github.com/basenana/nanafs/pkg/workflow/jobrun"

"github.com/basenana/nanafs/utils"

+ "github.com/goccy/go-yaml"

. "github.com/onsi/ginkgo"

. "github.com/onsi/gomega"

- "gopkg.in/yaml.v3"

"io"

"time"

)

diff --git a/pkg/workflow/utils.go b/pkg/workflow/utils.go

index 53c6618c..1e83df9f 100644

--- a/pkg/workflow/utils.go

+++ b/pkg/workflow/utils.go

@@ -35,12 +35,12 @@ var (

wfLogger *zap.SugaredLogger

)

-func assembleWorkflowJob(spec *types.WorkflowSpec, entry *types.Metadata) (*types.WorkflowJob, error) {

+func assembleWorkflowJob(spec *types.WorkflowSpec, tgt types.WorkflowTarget) (*types.WorkflowJob, error) {

var globalParam = map[string]string{}

j := &types.WorkflowJob{

Id: uuid.New().String(),

Workflow: spec.Id,

- Target: types.WorkflowTarget{EntryID: entry.ID},

+ Target: tgt,

Status: jobrun.InitializingStatus,

CreatedAt: time.Now(),

UpdatedAt: time.Now(),

diff --git a/vendor/github.com/fatih/color/LICENSE.md b/vendor/github.com/fatih/color/LICENSE.md

new file mode 100644

index 00000000..25fdaf63

--- /dev/null

+++ b/vendor/github.com/fatih/color/LICENSE.md

@@ -0,0 +1,20 @@

+The MIT License (MIT)

+

+Copyright (c) 2013 Fatih Arslan

+

+Permission is hereby granted, free of charge, to any person obtaining a copy of

+this software and associated documentation files (the "Software"), to deal in

+the Software without restriction, including without limitation the rights to

+use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of

+the Software, and to permit persons to whom the Software is furnished to do so,

+subject to the following conditions:

+

+The above copyright notice and this permission notice shall be included in all

+copies or substantial portions of the Software.

+

+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS

+FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR

+COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER

+IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN

+CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

diff --git a/vendor/github.com/fatih/color/README.md b/vendor/github.com/fatih/color/README.md

new file mode 100644

index 00000000..be82827c

--- /dev/null

+++ b/vendor/github.com/fatih/color/README.md

@@ -0,0 +1,176 @@

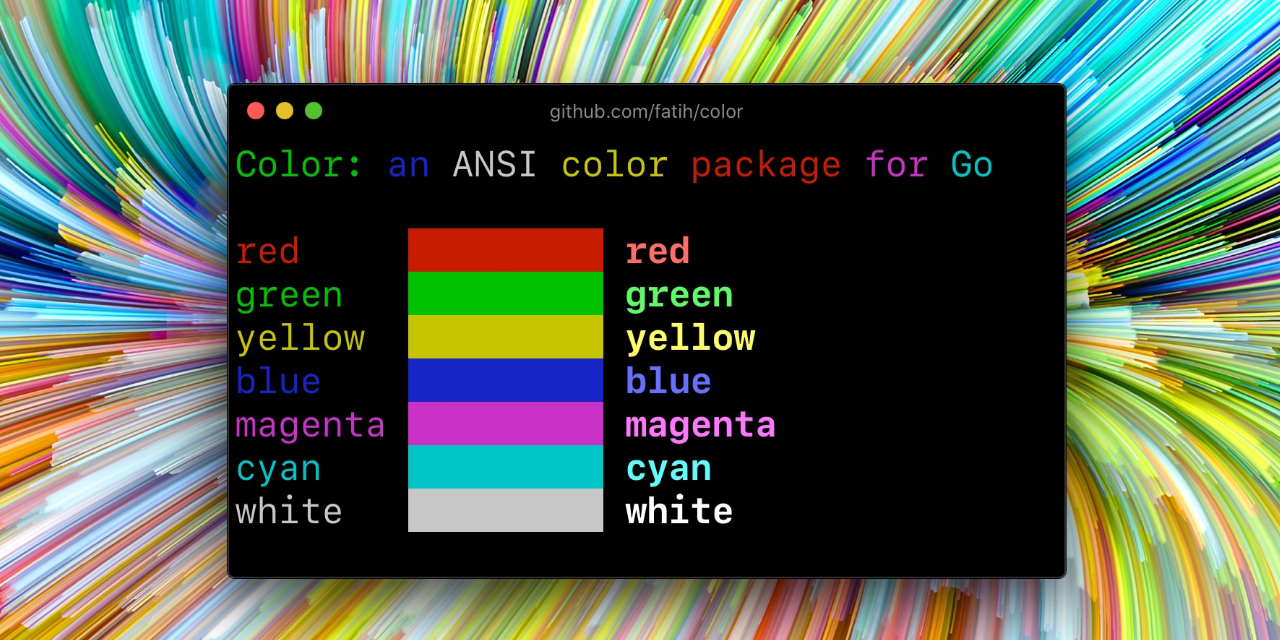

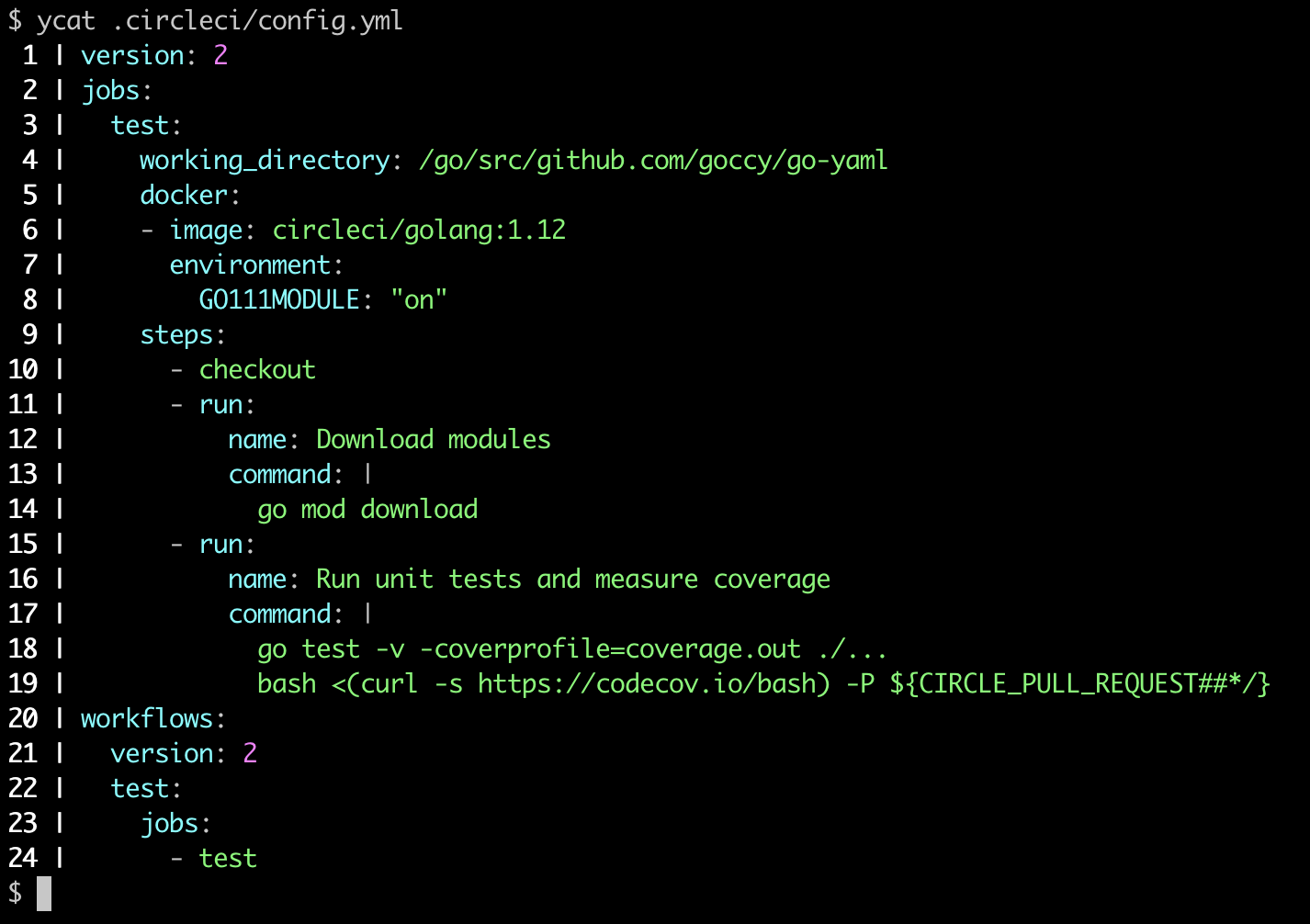

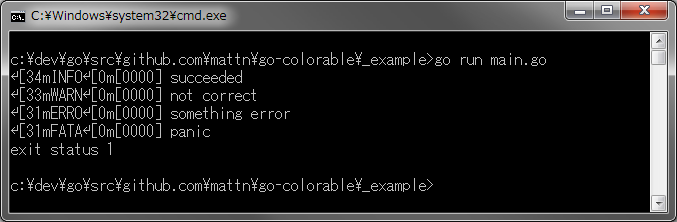

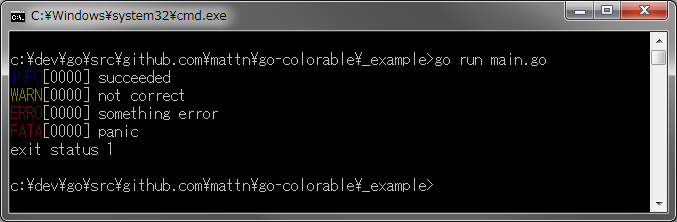

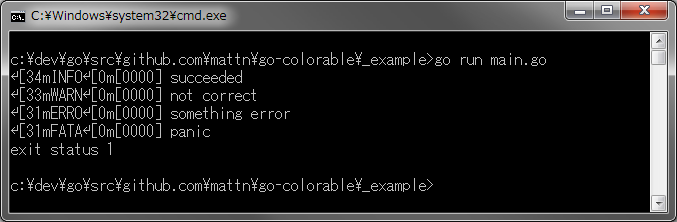

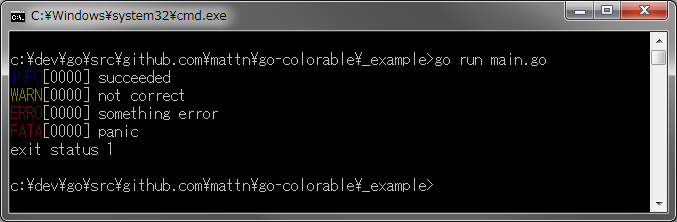

+# color

+

+Color lets you use colorized outputs in terms of [ANSI Escape

+Codes](http://en.wikipedia.org/wiki/ANSI_escape_code#Colors) in Go (Golang). It

+has support for Windows too! The API can be used in several ways, pick one that

+suits you.

+

+

+

+## Install

+

+```bash

+go get github.com/fatih/color

+```

+

+## Examples

+

+### Standard colors

+

+```go

+// Print with default helper functions

+color.Cyan("Prints text in cyan.")

+

+// A newline will be appended automatically

+color.Blue("Prints %s in blue.", "text")

+

+// These are using the default foreground colors

+color.Red("We have red")

+color.Magenta("And many others ..")

+

+```

+

+### Mix and reuse colors

+

+```go

+// Create a new color object

+c := color.New(color.FgCyan).Add(color.Underline)

+c.Println("Prints cyan text with an underline.")

+

+// Or just add them to New()

+d := color.New(color.FgCyan, color.Bold)

+d.Printf("This prints bold cyan %s\n", "too!.")

+

+// Mix up foreground and background colors, create new mixes!

+red := color.New(color.FgRed)

+

+boldRed := red.Add(color.Bold)

+boldRed.Println("This will print text in bold red.")

+

+whiteBackground := red.Add(color.BgWhite)

+whiteBackground.Println("Red text with white background.")

+```

+

+### Use your own output (io.Writer)

+

+```go

+// Use your own io.Writer output

+color.New(color.FgBlue).Fprintln(myWriter, "blue color!")

+

+blue := color.New(color.FgBlue)

+blue.Fprint(writer, "This will print text in blue.")

+```

+

+### Custom print functions (PrintFunc)

+

+```go

+// Create a custom print function for convenience

+red := color.New(color.FgRed).PrintfFunc()

+red("Warning")

+red("Error: %s", err)

+

+// Mix up multiple attributes

+notice := color.New(color.Bold, color.FgGreen).PrintlnFunc()

+notice("Don't forget this...")

+```

+

+### Custom fprint functions (FprintFunc)

+

+```go

+blue := color.New(color.FgBlue).FprintfFunc()

+blue(myWriter, "important notice: %s", stars)

+

+// Mix up with multiple attributes

+success := color.New(color.Bold, color.FgGreen).FprintlnFunc()

+success(myWriter, "Don't forget this...")

+```

+

+### Insert into noncolor strings (SprintFunc)

+

+```go

+// Create SprintXxx functions to mix strings with other non-colorized strings:

+yellow := color.New(color.FgYellow).SprintFunc()

+red := color.New(color.FgRed).SprintFunc()

+fmt.Printf("This is a %s and this is %s.\n", yellow("warning"), red("error"))

+

+info := color.New(color.FgWhite, color.BgGreen).SprintFunc()

+fmt.Printf("This %s rocks!\n", info("package"))

+

+// Use helper functions

+fmt.Println("This", color.RedString("warning"), "should not be neglected.")

+fmt.Printf("%v %v\n", color.GreenString("Info:"), "an important message.")

+

+// Windows supported too! Just don't forget to change the output to color.Output

+fmt.Fprintf(color.Output, "Windows support: %s", color.GreenString("PASS"))

+```

+

+### Plug into existing code

+

+```go

+// Use handy standard colors

+color.Set(color.FgYellow)

+

+fmt.Println("Existing text will now be in yellow")

+fmt.Printf("This one %s\n", "too")

+

+color.Unset() // Don't forget to unset

+

+// You can mix up parameters

+color.Set(color.FgMagenta, color.Bold)

+defer color.Unset() // Use it in your function

+

+fmt.Println("All text will now be bold magenta.")

+```

+

+### Disable/Enable color

+

+There might be a case where you want to explicitly disable/enable color output. The

+`go-isatty` package will automatically disable color output for non-tty output streams

+(for example if the output were piped directly to `less`).

+

+The `color` package also disables color output if the [`NO_COLOR`](https://no-color.org) environment

+variable is set to a non-empty string.

+

+`Color` has support to disable/enable colors programmatically both globally and

+for single color definitions. For example suppose you have a CLI app and a

+`-no-color` bool flag. You can easily disable the color output with:

+

+```go

+var flagNoColor = flag.Bool("no-color", false, "Disable color output")

+

+if *flagNoColor {

+ color.NoColor = true // disables colorized output

+}

+```

+

+It also has support for single color definitions (local). You can

+disable/enable color output on the fly:

+

+```go

+c := color.New(color.FgCyan)

+c.Println("Prints cyan text")

+

+c.DisableColor()

+c.Println("This is printed without any color")

+

+c.EnableColor()

+c.Println("This prints again cyan...")

+```

+

+## GitHub Actions

+

+To output color in GitHub Actions (or other CI systems that support ANSI colors), make sure to set `color.NoColor = false` so that it bypasses the check for non-tty output streams.

+

+## Todo

+

+* Save/Return previous values

+* Evaluate fmt.Formatter interface

+

+## Credits

+

+* [Fatih Arslan](https://github.com/fatih)

+* Windows support via @mattn: [colorable](https://github.com/mattn/go-colorable)

+

+## License

+

+The MIT License (MIT) - see [`LICENSE.md`](https://github.com/fatih/color/blob/master/LICENSE.md) for more details
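
Note on the vendored README above: it describes colorized output in terms of ANSI escape codes. As a rough illustration of what such a sequence looks like on the wire, here is a minimal sketch that uses only the standard library. The helper name `sgrWrap` is hypothetical and is not part of `fatih/color`. The numeric codes 1 (bold) and 36 (cyan foreground) are the standard SGR values, and `0` resets all attributes.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// sgrWrap (hypothetical helper) surrounds s with an SGR "set" sequence built
// from the given numeric codes and a trailing reset sequence.
func sgrWrap(s string, codes ...int) string {
	if len(codes) == 0 {
		return s // nothing to apply, return the text unchanged
	}
	parts := make([]string, len(codes))
	for i, c := range codes {
		parts[i] = strconv.Itoa(c)
	}
	// ESC [ <codes joined by ';'> m ... ESC [ 0 m
	return "\x1b[" + strings.Join(parts, ";") + "m" + s + "\x1b[0m"
}

func main() {
	// %q shows the escape bytes instead of letting the terminal interpret them.
	fmt.Printf("%q\n", sgrWrap("warning", 1, 36))
}
```

A library such as the one vendored here adds the parts this sketch leaves out, for example terminal detection and `NO_COLOR` handling.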

diff --git a/vendor/github.com/fatih/color/color.go b/vendor/github.com/fatih/color/color.go

new file mode 100644

index 00000000..889f9e77

--- /dev/null

+++ b/vendor/github.com/fatih/color/color.go

@@ -0,0 +1,616 @@

+package color

+

+import (

+ "fmt"

+ "io"

+ "os"

+ "strconv"

+ "strings"

+ "sync"

+

+ "github.com/mattn/go-colorable"

+ "github.com/mattn/go-isatty"

+)

+

+var (

+ // NoColor defines if the output is colorized or not. It's dynamically set to

+ // false or true based on the stdout's file descriptor referring to a terminal

+ // or not. It's also set to true if the NO_COLOR environment variable is

+ // set to a non-empty string. This is a global option and affects all

+ // colors. For more control over each color block use the methods

+ // DisableColor() individually.

+ NoColor = noColorIsSet() || os.Getenv("TERM") == "dumb" ||

+ (!isatty.IsTerminal(os.Stdout.Fd()) && !isatty.IsCygwinTerminal(os.Stdout.Fd()))

+

+ // Output defines the standard output of the print functions. By default,

+ // os.Stdout is used.

+ Output = colorable.NewColorableStdout()

+

+ // Error defines a color supporting writer for os.Stderr.

+ Error = colorable.NewColorableStderr()

+

+ // colorsCache is used to reduce the count of created Color objects and

+ // allows to reuse already created objects with required Attribute.

+ colorsCache = make(map[Attribute]*Color)

+ colorsCacheMu sync.Mutex // protects colorsCache

+)

+

+// noColorIsSet returns true if the environment variable NO_COLOR is set to a non-empty string.

+func noColorIsSet() bool {

+ return os.Getenv("NO_COLOR") != ""

+}

+

+// Color defines a custom color object which is defined by SGR parameters.

+type Color struct {

+ params []Attribute

+ noColor *bool

+}

+

+// Attribute defines a single SGR Code

+type Attribute int

+

+const escape = "\x1b"

+

+// Base attributes

+const (

+ Reset Attribute = iota

+ Bold

+ Faint

+ Italic

+ Underline

+ BlinkSlow

+ BlinkRapid

+ ReverseVideo

+ Concealed

+ CrossedOut

+)

+

+// Foreground text colors

+const (

+ FgBlack Attribute = iota + 30

+ FgRed

+ FgGreen

+ FgYellow

+ FgBlue

+ FgMagenta

+ FgCyan

+ FgWhite

+)

+

+// Foreground Hi-Intensity text colors

+const (

+ FgHiBlack Attribute = iota + 90

+ FgHiRed

+ FgHiGreen

+ FgHiYellow

+ FgHiBlue

+ FgHiMagenta

+ FgHiCyan

+ FgHiWhite

+)

+

+// Background text colors

+const (

+ BgBlack Attribute = iota + 40

+ BgRed

+ BgGreen

+ BgYellow

+ BgBlue

+ BgMagenta

+ BgCyan

+ BgWhite

+)

+

+// Background Hi-Intensity text colors

+const (

+ BgHiBlack Attribute = iota + 100

+ BgHiRed

+ BgHiGreen

+ BgHiYellow

+ BgHiBlue

+ BgHiMagenta

+ BgHiCyan

+ BgHiWhite

+)

+

+// New returns a newly created color object.

+func New(value ...Attribute) *Color {

+ c := &Color{

+ params: make([]Attribute, 0),

+ }

+

+ if noColorIsSet() {

+ c.noColor = boolPtr(true)

+ }

+

+ c.Add(value...)

+ return c

+}

+

+// Set sets the given parameters immediately. It will change the color of

+// output with the given SGR parameters until color.Unset() is called.

+func Set(p ...Attribute) *Color {

+ c := New(p...)

+ c.Set()

+ return c

+}

+

+// Unset resets all escape attributes and clears the output. Usually should

+// be called after Set().

+func Unset() {

+ if NoColor {

+ return

+ }

+

+ fmt.Fprintf(Output, "%s[%dm", escape, Reset)

+}

+

+// Set sets the SGR sequence.

+func (c *Color) Set() *Color {

+ if c.isNoColorSet() {

+ return c

+ }

+

+ fmt.Fprint(Output, c.format())

+ return c

+}

+

+func (c *Color) unset() {

+ if c.isNoColorSet() {

+ return

+ }

+

+ Unset()

+}

+

+// SetWriter is used to set the SGR sequence with the given io.Writer. This is

+// a low-level function, and users should use the higher-level functions, such

+// as color.Fprint, color.Print, etc.

+func (c *Color) SetWriter(w io.Writer) *Color {

+ if c.isNoColorSet() {

+ return c

+ }

+

+ fmt.Fprint(w, c.format())

+ return c

+}

+

+// UnsetWriter resets all escape attributes and clears the output with the given

+// io.Writer. Usually should be called after SetWriter().

+func (c *Color) UnsetWriter(w io.Writer) {

+ if c.isNoColorSet() {

+ return

+ }

+

+ if NoColor {

+ return

+ }

+

+ fmt.Fprintf(w, "%s[%dm", escape, Reset)

+}

+

+// Add is used to chain SGR parameters. Use as many parameters as needed to combine

+// and create custom color objects. Example: Add(color.FgRed, color.Underline).

+func (c *Color) Add(value ...Attribute) *Color {

+ c.params = append(c.params, value...)

+ return c

+}

+

+// Fprint formats using the default formats for its operands and writes to w.

+// Spaces are added between operands when neither is a string.

+// It returns the number of bytes written and any write error encountered.

+// On Windows, users should wrap w with colorable.NewColorable() if w is of

+// type *os.File.

+func (c *Color) Fprint(w io.Writer, a ...interface{}) (n int, err error) {

+ c.SetWriter(w)

+ defer c.UnsetWriter(w)

+

+ return fmt.Fprint(w, a...)

+}

+

+// Print formats using the default formats for its operands and writes to

+// standard output. Spaces are added between operands when neither is a

+// string. It returns the number of bytes written and any write error

+// encountered. This is the standard fmt.Print() method wrapped with the given

+// color.

+func (c *Color) Print(a ...interface{}) (n int, err error) {

+ c.Set()

+ defer c.unset()

+

+ return fmt.Fprint(Output, a...)

+}

+

+// Fprintf formats according to a format specifier and writes to w.

+// It returns the number of bytes written and any write error encountered.

+// On Windows, users should wrap w with colorable.NewColorable() if w is of

+// type *os.File.

+func (c *Color) Fprintf(w io.Writer, format string, a ...interface{}) (n int, err error) {

+ c.SetWriter(w)

+ defer c.UnsetWriter(w)

+

+ return fmt.Fprintf(w, format, a...)

+}

+

+// Printf formats according to a format specifier and writes to standard output.

+// It returns the number of bytes written and any write error encountered.

+// This is the standard fmt.Printf() method wrapped with the given color.

+func (c *Color) Printf(format string, a ...interface{}) (n int, err error) {

+ c.Set()

+ defer c.unset()

+

+ return fmt.Fprintf(Output, format, a...)

+}

+

+// Fprintln formats using the default formats for its operands and writes to w.

+// Spaces are always added between operands and a newline is appended.

+// On Windows, users should wrap w with colorable.NewColorable() if w is of

+// type *os.File.

+func (c *Color) Fprintln(w io.Writer, a ...interface{}) (n int, err error) {

+ c.SetWriter(w)

+ defer c.UnsetWriter(w)

+

+ return fmt.Fprintln(w, a...)

+}

+

+// Println formats using the default formats for its operands and writes to

+// standard output. Spaces are always added between operands and a newline is

+// appended. It returns the number of bytes written and any write error

+// encountered. This is the standard fmt.Println() method wrapped with the given

+// color.

+func (c *Color) Println(a ...interface{}) (n int, err error) {

+ c.Set()

+ defer c.unset()

+

+ return fmt.Fprintln(Output, a...)

+}

+

+// Sprint is just like Print, but returns a string instead of printing it.

+func (c *Color) Sprint(a ...interface{}) string {

+ return c.wrap(fmt.Sprint(a...))

+}

+

+// Sprintln is just like Println, but returns a string instead of printing it.

+func (c *Color) Sprintln(a ...interface{}) string {

+ return c.wrap(fmt.Sprintln(a...))

+}

+

+// Sprintf is just like Printf, but returns a string instead of printing it.

+func (c *Color) Sprintf(format string, a ...interface{}) string {

+ return c.wrap(fmt.Sprintf(format, a...))

+}

+

+// FprintFunc returns a new function that prints the passed arguments as

+// colorized with color.Fprint().

+func (c *Color) FprintFunc() func(w io.Writer, a ...interface{}) {

+ return func(w io.Writer, a ...interface{}) {

+ c.Fprint(w, a...)

+ }

+}

+

+// PrintFunc returns a new function that prints the passed arguments as

+// colorized with color.Print().

+func (c *Color) PrintFunc() func(a ...interface{}) {

+ return func(a ...interface{}) {

+ c.Print(a...)

+ }

+}

+

+// FprintfFunc returns a new function that prints the passed arguments as

+// colorized with color.Fprintf().

+func (c *Color) FprintfFunc() func(w io.Writer, format string, a ...interface{}) {

+ return func(w io.Writer, format string, a ...interface{}) {

+ c.Fprintf(w, format, a...)

+ }

+}

+

+// PrintfFunc returns a new function that prints the passed arguments as

+// colorized with color.Printf().

+func (c *Color) PrintfFunc() func(format string, a ...interface{}) {

+ return func(format string, a ...interface{}) {

+ c.Printf(format, a...)

+ }

+}

+

+// FprintlnFunc returns a new function that prints the passed arguments as

+// colorized with color.Fprintln().

+func (c *Color) FprintlnFunc() func(w io.Writer, a ...interface{}) {

+ return func(w io.Writer, a ...interface{}) {

+ c.Fprintln(w, a...)

+ }

+}

+

+// PrintlnFunc returns a new function that prints the passed arguments as

+// colorized with color.Println().

+func (c *Color) PrintlnFunc() func(a ...interface{}) {

+ return func(a ...interface{}) {

+ c.Println(a...)

+ }

+}

+

+// SprintFunc returns a new function that returns colorized strings for the

+// given arguments with fmt.Sprint(). Useful to put into or mix into other

+// string. Windows users should use this in conjunction with color.Output, example:

+//

+// put := New(FgYellow).SprintFunc()

+// fmt.Fprintf(color.Output, "This is a %s", put("warning"))

+func (c *Color) SprintFunc() func(a ...interface{}) string {

+ return func(a ...interface{}) string {

+ return c.wrap(fmt.Sprint(a...))

+ }

+}

+

+// SprintfFunc returns a new function that returns colorized strings for the

+// given arguments with fmt.Sprintf(). Useful to put into or mix into other

+// string. Windows users should use this in conjunction with color.Output.

+func (c *Color) SprintfFunc() func(format string, a ...interface{}) string {

+ return func(format string, a ...interface{}) string {

+ return c.wrap(fmt.Sprintf(format, a...))

+ }

+}

+

+// SprintlnFunc returns a new function that returns colorized strings for the

+// given arguments with fmt.Sprintln(). Useful to put into or mix into other

+// string. Windows users should use this in conjunction with color.Output.

+func (c *Color) SprintlnFunc() func(a ...interface{}) string {

+ return func(a ...interface{}) string {

+ return c.wrap(fmt.Sprintln(a...))

+ }

+}

+

+// sequence returns a formatted SGR sequence to be plugged into a "\x1b[...m"

+// an example output might be: "1;36" -> bold cyan

+func (c *Color) sequence() string {

+ format := make([]string, len(c.params))

+ for i, v := range c.params {

+ format[i] = strconv.Itoa(int(v))

+ }

+

+ return strings.Join(format, ";")

+}

+

+// wrap wraps the s string with the colors attributes. The string is ready to

+// be printed.

+func (c *Color) wrap(s string) string {

+ if c.isNoColorSet() {

+ return s

+ }

+

+ return c.format() + s + c.unformat()

+}

+

+func (c *Color) format() string {

+ return fmt.Sprintf("%s[%sm", escape, c.sequence())

+}

+

+func (c *Color) unformat() string {

+ return fmt.Sprintf("%s[%dm", escape, Reset)

+}

+

+// DisableColor disables the color output. Useful to not change any existing

+// code and still being able to output. Can be used for flags like

+// "--no-color". To enable back use EnableColor() method.

+func (c *Color) DisableColor() {

+ c.noColor = boolPtr(true)

+}

+

+// EnableColor enables the color output. Use it in conjunction with

+// DisableColor(). Otherwise, this method has no side effects.

+func (c *Color) EnableColor() {

+ c.noColor = boolPtr(false)

+}

+

+func (c *Color) isNoColorSet() bool {

+ // check first if we have user set action

+ if c.noColor != nil {

+ return *c.noColor

+ }

+

+ // if not return the global option, which is disabled by default

+ return NoColor

+}

+

+// Equals returns a boolean value indicating whether two colors are equal.

+func (c *Color) Equals(c2 *Color) bool {

+ if len(c.params) != len(c2.params) {

+ return false

+ }

+

+ for _, attr := range c.params {

+ if !c2.attrExists(attr) {

+ return false

+ }

+ }

+

+ return true

+}

+

+func (c *Color) attrExists(a Attribute) bool {

+ for _, attr := range c.params {

+ if attr == a {

+ return true

+ }

+ }

+

+ return false

+}

+

+func boolPtr(v bool) *bool {

+ return &v

+}

+

+func getCachedColor(p Attribute) *Color {

+ colorsCacheMu.Lock()

+ defer colorsCacheMu.Unlock()

+

+ c, ok := colorsCache[p]

+ if !ok {

+ c = New(p)

+ colorsCache[p] = c

+ }

+

+ return c

+}

+

+func colorPrint(format string, p Attribute, a ...interface{}) {

+ c := getCachedColor(p)

+

+ if !strings.HasSuffix(format, "\n") {

+ format += "\n"

+ }

+

+ if len(a) == 0 {

+ c.Print(format)

+ } else {

+ c.Printf(format, a...)

+ }

+}

+

+func colorString(format string, p Attribute, a ...interface{}) string {

+ c := getCachedColor(p)

+

+ if len(a) == 0 {

+ return c.SprintFunc()(format)

+ }

+

+ return c.SprintfFunc()(format, a...)

+}

+

+// Black is a convenient helper function to print with black foreground. A

+// newline is appended to format by default.

+func Black(format string, a ...interface{}) { colorPrint(format, FgBlack, a...) }

+

+// Red is a convenient helper function to print with red foreground. A

+// newline is appended to format by default.

+func Red(format string, a ...interface{}) { colorPrint(format, FgRed, a...) }

+

+// Green is a convenient helper function to print with green foreground. A

+// newline is appended to format by default.

+func Green(format string, a ...interface{}) { colorPrint(format, FgGreen, a...) }

+

+// Yellow is a convenient helper function to print with yellow foreground.

+// A newline is appended to format by default.

+func Yellow(format string, a ...interface{}) { colorPrint(format, FgYellow, a...) }

+

+// Blue is a convenient helper function to print with blue foreground. A

+// newline is appended to format by default.

+func Blue(format string, a ...interface{}) { colorPrint(format, FgBlue, a...) }

+

+// Magenta is a convenient helper function to print with magenta foreground.

+// A newline is appended to format by default.

+func Magenta(format string, a ...interface{}) { colorPrint(format, FgMagenta, a...) }

+

+// Cyan is a convenient helper function to print with cyan foreground. A

+// newline is appended to format by default.

+func Cyan(format string, a ...interface{}) { colorPrint(format, FgCyan, a...) }

+

+// White is a convenient helper function to print with white foreground. A

+// newline is appended to format by default.

+func White(format string, a ...interface{}) { colorPrint(format, FgWhite, a...) }

+

+// BlackString is a convenient helper function to return a string with black

+// foreground.

+func BlackString(format string, a ...interface{}) string { return colorString(format, FgBlack, a...) }

+

+// RedString is a convenient helper function to return a string with red

+// foreground.

+func RedString(format string, a ...interface{}) string { return colorString(format, FgRed, a...) }

+

+// GreenString is a convenient helper function to return a string with green

+// foreground.

+func GreenString(format string, a ...interface{}) string { return colorString(format, FgGreen, a...) }

+

+// YellowString is a convenient helper function to return a string with yellow

+// foreground.

+func YellowString(format string, a ...interface{}) string { return colorString(format, FgYellow, a...) }

+

+// BlueString is a convenient helper function to return a string with blue

+// foreground.

+func BlueString(format string, a ...interface{}) string { return colorString(format, FgBlue, a...) }

+

+// MagentaString is a convenient helper function to return a string with magenta

+// foreground.

+func MagentaString(format string, a ...interface{}) string {

+ return colorString(format, FgMagenta, a...)

+}

+

+// CyanString is a convenient helper function to return a string with cyan

+// foreground.

+func CyanString(format string, a ...interface{}) string { return colorString(format, FgCyan, a...) }

+

+// WhiteString is a convenient helper function to return a string with white

+// foreground.

+func WhiteString(format string, a ...interface{}) string { return colorString(format, FgWhite, a...) }

+

+// HiBlack is a convenient helper function to print with hi-intensity black foreground. A

+// newline is appended to format by default.

+func HiBlack(format string, a ...interface{}) { colorPrint(format, FgHiBlack, a...) }

+

+// HiRed is a convenient helper function to print with hi-intensity red foreground. A

+// newline is appended to format by default.

+func HiRed(format string, a ...interface{}) { colorPrint(format, FgHiRed, a...) }

+

+// HiGreen is a convenient helper function to print with hi-intensity green foreground. A

+// newline is appended to format by default.

+func HiGreen(format string, a ...interface{}) { colorPrint(format, FgHiGreen, a...) }

+

+// HiYellow is a convenient helper function to print with hi-intensity yellow foreground.

+// A newline is appended to format by default.

+func HiYellow(format string, a ...interface{}) { colorPrint(format, FgHiYellow, a...) }

+

+// HiBlue is a convenient helper function to print with hi-intensity blue foreground. A

+// newline is appended to format by default.

+func HiBlue(format string, a ...interface{}) { colorPrint(format, FgHiBlue, a...) }

+

+// HiMagenta is a convenient helper function to print with hi-intensity magenta foreground.

+// A newline is appended to format by default.

+func HiMagenta(format string, a ...interface{}) { colorPrint(format, FgHiMagenta, a...) }

+

+// HiCyan is a convenient helper function to print with hi-intensity cyan foreground. A

+// newline is appended to format by default.

+func HiCyan(format string, a ...interface{}) { colorPrint(format, FgHiCyan, a...) }

+

+// HiWhite is a convenient helper function to print with hi-intensity white foreground. A

+// newline is appended to format by default.

+func HiWhite(format string, a ...interface{}) { colorPrint(format, FgHiWhite, a...) }

+

+// HiBlackString is a convenient helper function to return a string with hi-intensity black

+// foreground.

+func HiBlackString(format string, a ...interface{}) string {

+ return colorString(format, FgHiBlack, a...)

+}

+

+// HiRedString is a convenient helper function to return a string with hi-intensity red

+// foreground.

+func HiRedString(format string, a ...interface{}) string { return colorString(format, FgHiRed, a...) }

+

+// HiGreenString is a convenient helper function to return a string with hi-intensity green

+// foreground.

+func HiGreenString(format string, a ...interface{}) string {

+ return colorString(format, FgHiGreen, a...)

+}

+

+// HiYellowString is a convenient helper function to return a string with hi-intensity yellow

+// foreground.

+func HiYellowString(format string, a ...interface{}) string {

+ return colorString(format, FgHiYellow, a...)

+}

+

+// HiBlueString is a convenient helper function to return a string with hi-intensity blue

+// foreground.

+func HiBlueString(format string, a ...interface{}) string { return colorString(format, FgHiBlue, a...) }

+

+// HiMagentaString is a convenient helper function to return a string with hi-intensity magenta

+// foreground.

+func HiMagentaString(format string, a ...interface{}) string {

+ return colorString(format, FgHiMagenta, a...)

+}

+

+// HiCyanString is a convenient helper function to return a string with hi-intensity cyan

+// foreground.

+func HiCyanString(format string, a ...interface{}) string { return colorString(format, FgHiCyan, a...) }

+

+// HiWhiteString is a convenient helper function to return a string with hi-intensity white

+// foreground.

+func HiWhiteString(format string, a ...interface{}) string {

+ return colorString(format, FgHiWhite, a...)

+}
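Reviewer note: the `*String` helpers above all take a format string plus arguments and return the formatted text wrapped in a foreground color. The sketch below illustrates that shape using only the standard library. It is not the vendored implementation: `colorize`, `fgBlue`, and `fgHiBlue` are hypothetical names, and the escape sequences are the generic ANSI SGR set/reset pair, assumed here for illustration.

```go
package main

import "fmt"

// Hypothetical constants for this sketch: the standard ANSI SGR codes for
// blue (34) and bright/hi-intensity blue (94) foreground.
const (
	fgBlue   = 34
	fgHiBlue = 94
)

// colorize formats the message like fmt.Sprintf, then wraps the result in an
// SGR "set foreground" sequence and an SGR reset (code 0).
func colorize(code int, format string, a ...interface{}) string {
	return fmt.Sprintf("\x1b[%dm%s\x1b[0m", code, fmt.Sprintf(format, a...))
}

func main() {
	// On a terminal that understands ANSI sequences this prints in blue.
	fmt.Println(colorize(fgBlue, "disk usage: %d%%", 42))

	// %q makes the escape bytes visible instead of interpreting them.
	fmt.Printf("%q\n", colorize(fgHiBlue, "ok"))
}
```

Returning a string rather than printing makes the colored text easy to embed in a larger message or pass to a logger.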

diff --git a/vendor/github.com/fatih/color/color_windows.go b/vendor/github.com/fatih/color/color_windows.go

new file mode 100644

index 00000000..be01c558

--- /dev/null

+++ b/vendor/github.com/fatih/color/color_windows.go

@@ -0,0 +1,19 @@

+package color

+

+import (

+ "os"

+

+ "golang.org/x/sys/windows"

+)

+

+func init() {

+ // Opt in to ANSI color support for the current process.

+ // https://learn.microsoft.com/en-us/windows/console/console-virtual-terminal-sequences#output-sequences

+ var outMode uint32

+ out := windows.Handle(os.Stdout.Fd())

+ if err := windows.GetConsoleMode(out, &outMode); err != nil {

+ return

+ }

+ outMode |= windows.ENABLE_PROCESSED_OUTPUT | windows.ENABLE_VIRTUAL_TERMINAL_PROCESSING

+ _ = windows.SetConsoleMode(out, outMode)

+}

diff --git a/vendor/github.com/fatih/color/doc.go b/vendor/github.com/fatih/color/doc.go

new file mode 100644

index 00000000..9491ad54

--- /dev/null

+++ b/vendor/github.com/fatih/color/doc.go

@@ -0,0 +1,134 @@

+/*

+Package color is an ANSI color package to output colorized or SGR defined

+output to the standard output. The API can be used in several ways; pick one

+that suits you.

+

+Use simple and default helper functions with predefined foreground colors:

+

+ color.Cyan("Prints text in cyan.")

+

+ // a newline will be appended automatically

+ color.Blue("Prints %s in blue.", "text")

+

+ // More default foreground colors..

+ color.Red("We have red")

+ color.Yellow("Yellow color too!")

+ color.Magenta("And many others ..")

+

+ // Hi-intensity colors

+ color.HiGreen("Bright green color.")

+ color.HiBlack("Bright black means gray..")

+ color.HiWhite("Shiny white color!")

+

+However, there are times when custom color mixes are required. Below are some

+examples to create custom color objects and use the print functions of each

+separate color object.

+

+ // Create a new color object

+ c := color.New(color.FgCyan).Add(color.Underline)

+ c.Println("Prints cyan text with an underline.")

+

+ // Or just add them to New()

+ d := color.New(color.FgCyan, color.Bold)